Found 68 repositories (showing 30)

Part-DB

Part-DB is an open-source inventory management system for your electronic components.

Nate0634034090

# Ukraine-Cyber-Operations Curated Intelligence is working with analysts from around the world to provide useful information to organisations in Ukraine looking for additional free threat intelligence. Slava Ukraini. Glory to Ukraine. ([Blog](https://www.curatedintel.org/2021/08/welcome.html) | [Twitter](https://twitter.com/CuratedIntel) | [LinkedIn](https://www.linkedin.com/company/curatedintelligence/))   ### Analyst Comments: - 2022-02-25 - Creation of the initial repository to help organisations in Ukraine - Added [Threat Reports](https://github.com/curated-intel/Ukraine-Cyber-Operations#threat-reports) section - Added [Vendor Support](https://github.com/curated-intel/Ukraine-Cyber-Operations#vendor-support) section - 2022-02-26 - Additional resources, chronologically ordered (h/t Orange-CD) - Added [Vetted OSINT Sources](https://github.com/curated-intel/Ukraine-Cyber-Operations#vetted-osint-sources) section - Added [Miscellaneous Resources](https://github.com/curated-intel/Ukraine-Cyber-Operations#miscellaneous-resources) section - 2022-02-27 - Additional threat reports have been added - Added [Data Brokers](https://github.com/curated-intel/Ukraine-Cyber-Operations/blob/main/README.md#data-brokers) section - Added [Access Brokers](https://github.com/curated-intel/Ukraine-Cyber-Operations/blob/main/README.md#access-brokers) section - 2022-02-28 - Added Russian Cyber Operations Against Ukraine Timeline by ETAC - Added Vetted and Contextualized [Indicators of Compromise (IOCs)](https://github.com/curated-intel/Ukraine-Cyber-Operations/blob/main/ETAC_Vetted_UkraineRussiaWar_IOCs.csv) by ETAC - 2022-03-01 - Additional threat reports and resources have been added - 2022-03-02 - Additional [Indicators of Compromise (IOCs)](https://github.com/curated-intel/Ukraine-Cyber-Operations/blob/main/ETAC_Vetted_UkraineRussiaWar_IOCs.csv#L2011) have been added - Added vetted [YARA rule collection](https://github.com/curated-intel/Ukraine-Cyber-Operations/tree/main/yara) from 
the Threat Reports by ETAC - Added loosely-vetted [IOC Threat Hunt Feeds](https://github.com/curated-intel/Ukraine-Cyber-Operations/tree/main/KPMG-Egyde_Ukraine-Crisis_Feeds/MISP-CSV_MediumConfidence_Filtered) by KPMG-Egyde CTI (h/t [0xDISREL](https://twitter.com/0xDISREL)) - IOCs shared by these feeds are `LOW-TO-MEDIUM CONFIDENCE` we strongly recommend NOT adding them to a blocklist - These could potentially be used for `THREAT HUNTING` and could be added to a `WATCHLIST` - IOCs are generated in `MISP COMPATIBLE` CSV format - 2022-03-03 - Additional threat reports and vendor support resources have been added - Updated [Log4Shell IOC Threat Hunt Feeds](https://github.com/curated-intel/Log4Shell-IOCs/tree/main/KPMG_Log4Shell_Feeds) by KPMG-Egyde CTI; not directly related to Ukraine, but still a widespread vulnerability. - Added diagram of Russia-Ukraine Cyberwar Participants 2022 by ETAC - Additional [Indicators of Compromise (IOCs)](https://github.com/curated-intel/Ukraine-Cyber-Operations/blob/main/ETAC_Vetted_UkraineRussiaWar_IOCs.csv#L2042) have been added #### `Threat Reports` | Date | Source | Threat(s) | URL | | --- | --- | --- | --- | | 14 JAN | SSU Ukraine | Website Defacements | [ssu.gov.ua](https://ssu.gov.ua/novyny/sbu-rozsliduie-prychetnist-rosiiskykh-spetssluzhb-do-sohodnishnoi-kiberataky-na-orhany-derzhavnoi-vlady-ukrainy)| | 15 JAN | Microsoft | WhisperGate wiper (DEV-0586) | [microsoft.com](https://www.microsoft.com/security/blog/2022/01/15/destructive-malware-targeting-ukrainian-organizations/) | | 19 JAN | Elastic | WhisperGate wiper (Operation BleedingBear) | [elastic.github.io](https://elastic.github.io/security-research/malware/2022/01/01.operation-bleeding-bear/article/) | | 31 JAN | Symantec | Gamaredon/Shuckworm/PrimitiveBear (FSB) | [symantec-enterprise-blogs.security.com](https://symantec-enterprise-blogs.security.com/blogs/threat-intelligence/shuckworm-gamaredon-espionage-ukraine) | | 2 FEB | RaidForums | Access broker "GodLevel" 
offering Ukrainian agricultural exchange | RaidForums [not linked] | | 2 FEB | CERT-UA | UAC-0056 using SaintBot and OutSteel malware | [cert.gov.ua](https://cert.gov.ua/article/18419) | | 3 FEB | PAN Unit42 | Gamaredon/Shuckworm/PrimitiveBear (FSB) | [unit42.paloaltonetworks.com](https://unit42.paloaltonetworks.com/gamaredon-primitive-bear-ukraine-update-2021/) | | 4 FEB | Microsoft | Gamaredon/Shuckworm/PrimitiveBear (FSB) | [microsoft.com](https://www.microsoft.com/security/blog/2022/02/04/actinium-targets-ukrainian-organizations/) | | 8 FEB | NSFOCUS | Lorec53 (aka UAC-0056, EmberBear, BleedingBear) | [nsfocusglobal.com](https://nsfocusglobal.com/apt-retrospection-lorec53-an-active-russian-hack-group-launched-phishing-attacks-against-georgian-government) | | 15 FEB | CERT-UA | DDoS attacks against the name server of government websites as well as Oschadbank (State Savings Bank) & Privatbank (largest commercial bank). False SMS and e-mails to create panic | [cert.gov.ua](https://cert.gov.ua/article/37139) | | 23 FEB | The Daily Beast | Ukrainian troops receive threatening SMS messages | [thedailybeast.com](https://www.thedailybeast.com/cyberattacks-hit-websites-and-psy-ops-sms-messages-targeting-ukrainians-ramp-up-as-russia-moves-into-ukraine) | | 23 FEB | UK NCSC | Sandworm/VoodooBear (GRU) | [ncsc.gov.uk](https://www.ncsc.gov.uk/files/Joint-Sandworm-Advisory.pdf) | | 23 FEB | SentinelLabs | HermeticWiper | [sentinelone.com](https://www.sentinelone.com/labs/hermetic-wiper-ukraine-under-attack/) | | 24 FEB | ESET | HermeticWiper | [welivesecurity.com](https://www.welivesecurity.com/2022/02/24/hermeticwiper-new-data-wiping-malware-hits-ukraine/) | | 24 FEB | Symantec | HermeticWiper, PartyTicket ransomware, CVE-2021-1636, unknown webshell | [symantec-enterprise-blogs.security.com](https://symantec-enterprise-blogs.security.com/blogs/threat-intelligence/ukraine-wiper-malware-russia) | | 24 FEB | Cisco Talos | HermeticWiper | 
[blog.talosintelligence.com](https://blog.talosintelligence.com/2022/02/threat-advisory-hermeticwiper.html) | | 24 FEB | Zscaler | HermeticWiper | [zscaler.com](https://www.zscaler.com/blogs/security-research/hermetic-wiper-resurgence-targeted-attacks-ukraine) | | 24 FEB | Cluster25 | HermeticWiper | [cluster25.io](https://cluster25.io/2022/02/24/ukraine-analysis-of-the-new-disk-wiping-malware/) | | 24 FEB | CronUp | Data broker "FreeCivilian" offering multiple .gov.ua | [twitter.com/1ZRR4H](https://twitter.com/1ZRR4H/status/1496931721052311557)| | 24 FEB | RaidForums | Data broker "Featherine" offering diia.gov.ua | RaidForums [not linked] | | 24 FEB | DomainTools | Unknown scammers | [twitter.com/SecuritySnacks](https://twitter.com/SecuritySnacks/status/1496956492636905473?s=20&t=KCIX_1Ughc2Fs6Du-Av0Xw) | | 25 FEB | @500mk500 | Gamaredon/Shuckworm/PrimitiveBear (FSB) | [twitter.com/500mk500](https://twitter.com/500mk500/status/1497339266329894920?s=20&t=opOtwpn82ztiFtwUbLkm9Q) | | 25 FEB | @500mk500 | Gamaredon/Shuckworm/PrimitiveBear (FSB) | [twitter.com/500mk500](https://twitter.com/500mk500/status/1497208285472215042)| | 25 FEB | Microsoft | HermeticWiper | [gist.github.com](https://gist.github.com/fr0gger/7882fde2b1b271f9e886a4a9b6fb6b7f) | | 25 FEB | 360 NetLab | DDoS (Mirai, Gafgyt, IRCbot, Ripprbot, Moobot) | [blog.netlab.360.com](https://blog.netlab.360.com/some_details_of_the_ddos_attacks_targeting_ukraine_and_russia_in_recent_days/) | | 25 FEB | Conti [themselves] | Conti ransomware, BazarLoader | Conti News .onion [not linked] | | 25 FEB | CoomingProject [themselves] | Data Hostage Group | CoomingProject Telegram [not linked] | | 25 FEB | CERT-UA | UNC1151/Ghostwriter (Belarus MoD) | [CERT-UA Facebook](https://facebook.com/story.php?story_fbid=312939130865352&id=100064478028712)| | 25 FEB | Sekoia | UNC1151/Ghostwriter (Belarus MoD) | [twitter.com/sekoia_io](https://twitter.com/sekoia_io/status/1497239319295279106) | | 25 FEB | @jaimeblascob | 
UNC1151/Ghostwriter (Belarus MoD) | [twitter.com/jaimeblascob](https://twitter.com/jaimeblascob/status/1497242668627370009)| | 25 FEB | RISKIQ | UNC1151/Ghostwriter (Belarus MoD) | [community.riskiq.com](https://community.riskiq.com/article/e3a7ceea/) | | 25 FEB | MalwareHunterTeam | Unknown phishing | [twitter.com/malwrhunterteam](https://twitter.com/malwrhunterteam/status/1497235270416097287) | | 25 FEB | ESET | Unknown scammers | [twitter.com/ESETresearch](https://twitter.com/ESETresearch/status/1497194165561659394) | | 25 FEB | BitDefender | Unknown scammers | [blog.bitdefender.com](https://blog.bitdefender.com/blog/hotforsecurity/cybercriminals-deploy-spam-campaign-as-tens-of-thousands-of-ukrainians-seek-refuge-in-neighboring-countries/) | | 25 FEB | SSSCIP Ukraine | Unknown phishing | [twitter.com/dsszzi](https://twitter.com/dsszzi/status/1497103078029291522) | | 25 FEB | RaidForums | Data broker "NetSec" offering FSB (likely SMTP accounts) | RaidForums [not linked] | | 25 FEB | Zscaler | PartyTicket decoy ransomware | [zscaler.com](https://www.zscaler.com/blogs/security-research/technical-analysis-partyticket-ransomware) | | 25 FEB | INCERT GIE | Cyclops Blink, HermeticWiper | [linkedin.com](https://www.linkedin.com/posts/activity-6902989337210740736-XohK) [Login Required] | | 25 FEB | Proofpoint | UNC1151/Ghostwriter (Belarus MoD) | [twitter.com/threatinsight](https://twitter.com/threatinsight/status/1497355737844133895?s=20&t=Ubi0tb_XxGCbHLnUoQVp8w) | | 25 FEB | @fr0gger_ | HermeticWiper capabilities Overview | [twitter.com/fr0gger_](https://twitter.com/fr0gger_/status/1497121876870832128?s=20&t=_296n0bPeUgdXleX02M9mg) | | 26 FEB | BBC Journalist | A fake Telegram account claiming to be President Zelensky is posting dubious messages | [twitter.com/shayan86](https://twitter.com/shayan86/status/1497485340738785283?s=21) | | 26 FEB | CERT-UA | UNC1151/Ghostwriter (Belarus MoD) | [CERT-UA 
Facebook](https://facebook.com/story.php?story_fbid=313517477474184&id=100064478028712) | | 26 FEB | MHT and TRMLabs | Unknown scammers, linked to ransomware | [twitter.com/joes_mcgill](https://twitter.com/joes_mcgill/status/1497609555856932864?s=20&t=KCIX_1Ughc2Fs6Du-Av0Xw) | | 26 FEB | US CISA | WhisperGate wiper, HermeticWiper | [cisa.gov](https://www.cisa.gov/uscert/ncas/alerts/aa22-057a) | | 26 FEB | Bloomberg | Destructive malware (possibly HermeticWiper) deployed at Ukrainian Ministry of Internal Affairs & data stolen from Ukrainian telecommunications networks | [bloomberg.com](https://www.bloomberg.com/news/articles/2022-02-26/hackers-destroyed-data-at-key-ukraine-agency-before-invasion?sref=ylv224K8) | | 26 FEB | Vice Prime Minister of Ukraine | IT ARMY of Ukraine created to crowdsource offensive operations against Russian infrastructure | [twitter.com/FedorovMykhailo](https://twitter.com/FedorovMykhailo/status/1497642156076511233) | | 26 FEB | Yoroi | HermeticWiper | [yoroi.company](https://yoroi.company/research/diskkill-hermeticwiper-a-disruptive-cyber-weapon-targeting-ukraines-critical-infrastructures) | | 27 FEB | LockBit [themselves] | LockBit ransomware | LockBit .onion [not linked] | | 27 FEB | ALPHV [themselves] | ALPHV ransomware | vHUMINT [closed source] | | 27 FEB | Mēris Botnet [themselves] | DDoS attacks | vHUMINT [closed source] | | 28 FEB | Horizon News [themselves] | Leak of China's Censorship Order about Ukraine | [TechARP](https://www-techarp-com.cdn.ampproject.org/c/s/www.techarp.com/internet/chinese-media-leaks-ukraine-censor/?amp=1)| | 28 FEB | Microsoft | FoxBlade (aka HermeticWiper) | [Microsoft](https://blogs.microsoft.com/on-the-issues/2022/02/28/ukraine-russia-digital-war-cyberattacks/?preview_id=65075) | | 28 FEB | @heymingwei | Potential BGP hijacks attempts against Ukrainian Internet Names Center | [https://twitter.com/heymingwei](https://twitter.com/heymingwei/status/1498362715198263300?s=20&t=Ju31gTurYc8Aq_yZMbvbxg) | | 28 
FEB | @cyberknow20 | Stormous ransomware targets Ukraine Ministry of Foreign Affairs | [twitter.com/cyberknow20](https://twitter.com/cyberknow20/status/1498434090206314498?s=21) | | 1 MAR | ESET | IsaacWiper and HermeticWizard | [welivesecurity.com](https://www.welivesecurity.com/2022/03/01/isaacwiper-hermeticwizard-wiper-worm-targeting-ukraine/) | | 1 MAR | Proofpoint | Ukrainian armed service member's email compromised and sent malspam containing the SunSeed malware (likely TA445/UNC1151/Ghostwriter) | [proofpoint.com](https://www.proofpoint.com/us/blog/threat-insight/asylum-ambuscade-state-actor-uses-compromised-private-ukrainian-military-emails) | | 1 MAR | Elastic | HermeticWiper | [elastic.github.io](https://elastic.github.io/security-research/intelligence/2022/03/01.hermeticwiper-targets-ukraine/article/) | | 1 MAR | CrowdStrike | PartyTicket (aka HermeticRansom), DriveSlayer (aka HermeticWiper) | [CrowdStrike](https://www.crowdstrike.com/blog/how-to-decrypt-the-partyticket-ransomware-targeting-ukraine/) | | 2 MAR | Zscaler | DanaBot operators launch DDoS attacks against the Ukrainian Ministry of Defense | [zscaler.com](https://www.zscaler.com/blogs/security-research/danabot-launches-ddos-attack-against-ukrainian-ministry-defense) | | 3 MAR | @ShadowChasing1 | Gamaredon/Shuckworm/PrimitiveBear (FSB) | [twitter.com/ShadowChasing1](https://twitter.com/ShadowChasing1/status/1499361093059153921) | | 3 MAR | @vxunderground | News website in Poland was reportedly compromised and the threat actor uploaded anti-Ukrainian propaganda | [twitter.com/vxunderground](https://twitter.com/vxunderground/status/1499374914758918151?s=20&t=jyy9Hnpzy-5P1gcx19bvIA) | | 3 MAR | @kylaintheburgh | Russian botnet on Twitter is pushing "#istandwithputin" and "#istandwithrussia" propaganda (in English) | [twitter.com/kylaintheburgh](https://twitter.com/kylaintheburgh/status/1499350578371067906?s=21) | | 3 MAR | @tracerspiff | UNC1151/Ghostwriter (Belarus MoD) | 
[twitter.com](https://twitter.com/tracerspiff/status/1499444876810854408?s=21) | #### `Access Brokers` | Date | Threat(s) | Source | | --- | --- | --- | | 23 JAN | Access broker "Mont4na" offering UkrFerry | RaidForums [not linked] | | 23 JAN | Access broker "Mont4na" offering PrivatBank | RaidForums [not linked] | | 24 JAN | Access broker "Mont4na" offering DTEK | RaidForums [not linked] | | 27 FEB | KelvinSecurity Sharing list of IP cameras in Ukraine | vHUMINT [closed source] | | 28 FEB | "w1nte4mute" looking to buy access to UA and NATO countries (likely ransomware affiliate) | vHUMINT [closed source] | #### `Data Brokers` | Threat Actor | Type | Observation | Validated | Relevance | Source | | --------------- | --------------- | --------------------------------------------------------------------------------------------------------- | --------- | ----------------------------- | ---------------------------------------------------------- | | aguyinachair | UA data sharing | PII DB of ukraine.com (shared as part of a generic compilation) | No | TA discussion in past 90 days | ELeaks Forum \[not linked\] | | an3key | UA data sharing | DB of Ministry of Communities and Territories Development of Ukraine (minregion\[.\]gov\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | an3key | UA data sharing | DB of Ukrainian Ministry of Internal Affairs (wanted\[.\]mvs\[.\]gov\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB (40M) of PrivatBank customers (privatbank\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | DB of "border crossing" DBs of DPR and LPR | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB (7.5M) of Ukrainian passports | No | TA 
discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB of Ukrainian car registration, license plates, Ukrainian traffic police records | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB (2.1M) of Ukrainian citizens | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB (28M) of Ukrainian citizens (passports, drivers licenses, photos) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB (1M) of Ukrainian postal/courier service customers (novaposhta\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB (10M) of Ukrainian telecom customers (vodafone\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB (3M) of Ukrainian telecom customers (lifecell\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | CorelDraw | UA data sharing | PII DB (13M) of Ukrainian telecom customers (kyivstar\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | danieltx51 | UA data sharing | DB of Ministry of Foreign Affairs of Ukraine (mfa\[.\]gov\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | DueDiligenceCIS | UA data sharing | PII DB (63M) of Ukrainian citizens (name, DOB, birth country, phone, TIN, passport, family, etc) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | Featherine | UA data sharing | DB of Ukrainian 'Diia' e-Governance Portal for Ministry of 
Digital Transformation of Ukraine | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | FreeCivilian | UA data sharing | DB of Ministry for Internal Affairs of Ukraine public data search engine (wanted\[.\]mvs\[.\]gov\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | FreeCivilian | UA data sharing | DB of Ministry for Communities and Territories Development of Ukraine (minregion\[.\]gov\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | FreeCivilian | UA data sharing | DB of Motor Insurance Bureau of Ukraine (mtsbu\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | FreeCivilian | UA data sharing | PII DB of Ukrainian digital-medicine provider (medstar\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | FreeCivilian | UA data sharing | DB of ticket.kyivcity.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of id.kyivcity.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of my.kyivcity.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of portal.kyivcity.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of anti-violence-map.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of dopomoga.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of e-services.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data 
sharing | DB of edu.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of education.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of ek-cbi.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mail.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of portal-gromady.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of web-minsoc.msp.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of wcs-wim.dsbt.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of bdr.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of motorsich.com | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of dsns.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mon.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of minagro.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of zt.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of kmu.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing 
| DB of dsbt.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of forest.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of nkrzi.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of dabi.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of comin.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of dp.dpss.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of esbu.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mms.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mova.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mspu.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of nads.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of reintegration.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of sies.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of sport.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mepr.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mfa.gov.ua | No | TA discussion in past 
90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of va.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mtu.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of cg.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of ch-tmo.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of cp.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of cpd.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of hutirvilnij-mrc.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of dndekc.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of visnyk.dndekc.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of dpvs.hsc.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of odk.mvs.gov.ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of e-driver\[.\]hsc\[.\]gov\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of wanted\[.\]mvs\[.\]gov\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of minregeion\[.\]gov\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of 
health\[.\]mia\[.\]solutions | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mtsbu\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of motorsich\[.\]com | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of kyivcity\[.\]com | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of bdr\[.\]mvs\[.\]gov\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of gkh\[.\]in\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of kmu\[.\]gov\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mon\[.\]gov\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of minagro\[.\]gov\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | FreeCivilian | UA data sharing | DB of mfa\[.\]gov\[.\]ua | No | TA discussion in past 90 days | FreeCivilian .onion \[not linked\] | | Intel\_Data | UA data sharing | PII DB (56M) of Ukrainian Citizens | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | Kristina | UA data sharing | DB of Ukrainian National Police (mvs\[.\]gov\[.\]ua) | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | NetSec | UA data sharing | PII DB (53M) of Ukrainian citizens | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | Psycho\_Killer | UA data sharing | PII DB (56M) of Ukrainian Citizens | No | TA discussion in past 90 days | Exploit Forum .onion \[not linked\] | | 
Sp333 | UA data sharing | PII DB of Ukrainian and Russian interpreters, translators, and tour guides | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | Vaticano | UA data sharing | DB of Ukrainian 'Diia' e-Governance Portal for Ministry of Digital Transformation of Ukraine \[copy\] | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | | Vaticano | UA data sharing | DB of Ministry for Communities and Territories Development of Ukraine (minregion\[.\]gov\[.\]ua) \[copy\] | No | TA discussion in past 90 days | RaidForums \[not linked; site hijacked since UA invasion\] | #### `Vendor Support` | Vendor | Offering | URL | | --- | --- | --- | | Dragos | Access to Dragos service if from US/UK/ANZ and in need of ICS cybersecurity support | [twitter.com/RobertMLee](https://twitter.com/RobertMLee/status/1496862093588455429) | | GreyNoise | Any and all `Ukrainian` emails registered to GreyNoise have been upgraded to VIP which includes full, uncapped enterprise access to all GreyNoise products | [twitter.com/Andrew___Morris](https://twitter.com/Andrew___Morris/status/1496923545712091139) | | Recorded Future | Providing free intelligence-driven insights, perspectives, and mitigation strategies as the situation in Ukraine evolves| [recordedfuture.com](https://www.recordedfuture.com/ukraine/) | | Flashpoint | Free Access to Flashpoint’s Latest Threat Intel on Ukraine | [go.flashpoint-intel.com](https://go.flashpoint-intel.com/trial/access/30days) | | ThreatABLE | A Ukraine tag for free threat intelligence feed that's more highly curated to cyber| [twitter.com/threatable](https://twitter.com/threatable/status/1497233721803644950) | | Orange | IOCs related to Russia-Ukraine 2022 conflict extracted from our Datalake Threat Intelligence platform. 
| [github.com/Orange-Cyberdefense](https://github.com/Orange-Cyberdefense/russia-ukraine_IOCs)| | FSecure | F-Secure FREEDOME VPN is now available for free in all of Ukraine | [twitter.com/FSecure](https://twitter.com/FSecure/status/1497248407303462960) | | Multiple vendors | List of vendors offering their services to Ukraine for free, put together by [@chrisculling](https://twitter.com/chrisculling/status/1497023038323404803) | [docs.google.com/spreadsheets](https://docs.google.com/spreadsheets/d/18WYY9p1_DLwB6dnXoiiOAoWYD8X0voXtoDl_ZQzjzUQ/edit#gid=0) | | Mandiant | Free threat intelligence, webinar and guidance for defensive measures relevant to the situation in Ukraine. | [mandiant.com](https://www.mandiant.com/resources/insights/ukraine-crisis-resource-center) | | Starlink | Satellite internet constellation operated by SpaceX providing satellite Internet access coverage to Ukraine | [twitter.com/elonmusk](https://twitter.com/elonmusk/status/1497701484003213317) | | Romania DNSC | Romania’s DNSC – in partnership with Bitdefender – will provide technical consulting, threat intelligence and, free of charge, cybersecurity technology to any business, government institution or private citizen of Ukraine for as long as it is necessary. 
| [Romania's DNSC Press Release](https://dnsc.ro/citeste/press-release-dnsc-and-bitdefender-work-together-in-support-of-ukraine)| | BitDefender | Access to Bitdefender technical consulting, threat intelligence and both consumer and enterprise cybersecurity technology | [bitdefender.com/ukraine/](https://www.bitdefender.com/ukraine/) | | NameCheap | Free anonymous hosting and domain name registration to any anti-Putin anti-regime and protest websites for anyone located within Russia and Belarus | [twitter.com/Namecheap](https://twitter.com/Namecheap/status/1498998414020861953) | | Avast | Free decryptor for PartyTicket ransomware | [decoded.avast.io](https://decoded.avast.io/threatresearch/help-for-ukraine-free-decryptor-for-hermeticransom-ransomware/) | #### `Vetted OSINT Sources` | Handle | Affiliation | | --- | --- | | [@KyivIndependent](https://twitter.com/KyivIndependent) | English-language journalism in Ukraine | | [@IAPonomarenko](https://twitter.com/IAPonomarenko) | Defense reporter with The Kyiv Independent | | [@KyivPost](https://twitter.com/KyivPost) | English-language journalism in Ukraine | | [@Shayan86](https://twitter.com/Shayan86) | BBC World News Disinformation journalist | | [@Liveuamap](https://twitter.com/Liveuamap) | Live Universal Awareness Map (“Liveuamap”) independent global news and information site | | [@DAlperovitch](https://twitter.com/DAlperovitch) | The Alperovitch Institute for Cybersecurity Studies, Founder & Former CTO of CrowdStrike | | [@COUPSURE](https://twitter.com/COUPSURE) | OSINT investigator for Centre for Information Resilience | | [@netblocks](https://twitter.com/netblocks) | London-based Internet's Observatory | #### `Miscellaneous Resources` | Source | URL | Content | | --- | --- | --- | | PowerOutages.com | https://poweroutage.com/ua | Tracking PowerOutages across Ukraine | | Monash IP Observatory | https://twitter.com/IP_Observatory | Tracking IP address outages across Ukraine | | Project Owl Discord | 
https://discord.com/invite/projectowl | Tracking foreign policy, geopolitical events, military and governments, using a Discord-based crowdsourced approach, with a current emphasis on Ukraine and Russia | | russianwarchatter.info | https://www.russianwarchatter.info/ | Known Russian Military Radio Frequencies |

TLeconte

Server component for acarsdec and vdlm2dec: receives ACARS messages in JSON format and stores them in an SQLite database
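As a rough illustration of the receive-and-store flow described above, the following Python sketch parses one JSON message and writes it to SQLite. It is not the project's own code; the table layout and the field names (`timestamp`, `tail`, `flight`, `text`) are assumptions chosen for the example.

```python
import json
import sqlite3


def store_message(conn, raw_json):
    """Parse one JSON-encoded message and insert it into the messages table.

    The field names used here are hypothetical, not taken from the project.
    """
    msg = json.loads(raw_json)
    conn.execute(
        "INSERT INTO messages (timestamp, tail, flight, text) VALUES (?, ?, ?, ?)",
        (msg.get("timestamp"), msg.get("tail"), msg.get("flight"), msg.get("text")),
    )
    conn.commit()


# In-memory database so the sketch runs without touching the disk.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE messages ("
    "id INTEGER PRIMARY KEY, timestamp REAL, tail TEXT, flight TEXT, text TEXT)"
)

# Made-up sample message using the assumed field names.
sample = '{"timestamp": 1560000000.0, "tail": "F-ABCD", "flight": "AF1234", "text": "TEST MESSAGE"}'
store_message(conn, sample)

rows = conn.execute("SELECT tail, flight, text FROM messages").fetchall()
print(rows)
```

Using `msg.get(...)` rather than indexing means a message that lacks one of the assumed fields is stored with a NULL in that column instead of raising an error.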

HlaingPhyoAung

Usage: python sqlmap.py [options] Options: -h, --help Show basic help message and exit -hh Show advanced help message and exit --version Show program's version number and exit -v VERBOSE Verbosity level: 0-6 (default 1) Target: At least one of these options has to be provided to define the target(s) -d DIRECT Connection string for direct database connection -u URL, --url=URL Target URL (e.g. "http://www.site.com/vuln.php?id=1") -l LOGFILE Parse target(s) from Burp or WebScarab proxy log file -x SITEMAPURL Parse target(s) from remote sitemap(.xml) file -m BULKFILE Scan multiple targets given in a textual file -r REQUESTFILE Load HTTP request from a file -g GOOGLEDORK Process Google dork results as target URLs -c CONFIGFILE Load options from a configuration INI file Request: These options can be used to specify how to connect to the target URL --method=METHOD Force usage of given HTTP method (e.g. PUT) --data=DATA Data string to be sent through POST --param-del=PARA.. Character used for splitting parameter values --cookie=COOKIE HTTP Cookie header value --cookie-del=COO.. Character used for splitting cookie values --load-cookies=L.. File containing cookies in Netscape/wget format --drop-set-cookie Ignore Set-Cookie header from response --user-agent=AGENT HTTP User-Agent header value --random-agent Use randomly selected HTTP User-Agent header value --host=HOST HTTP Host header value --referer=REFERER HTTP Referer header value -H HEADER, --hea.. Extra header (e.g. "X-Forwarded-For: 127.0.0.1") --headers=HEADERS Extra headers (e.g. "Accept-Language: fr\nETag: 123") --auth-type=AUTH.. HTTP authentication type (Basic, Digest, NTLM or PKI) --auth-cred=AUTH.. HTTP authentication credentials (name:password) --auth-file=AUTH.. HTTP authentication PEM cert/private key file --ignore-401 Ignore HTTP Error 401 (Unauthorized) --proxy=PROXY Use a proxy to connect to the target URL --proxy-cred=PRO.. Proxy authentication credentials (name:password) --proxy-file=PRO.. 
Load proxy list from a file --ignore-proxy Ignore system default proxy settings --tor Use Tor anonymity network --tor-port=TORPORT Set Tor proxy port other than default --tor-type=TORTYPE Set Tor proxy type (HTTP (default), SOCKS4 or SOCKS5) --check-tor Check to see if Tor is used properly --delay=DELAY Delay in seconds between each HTTP request --timeout=TIMEOUT Seconds to wait before timeout connection (default 30) --retries=RETRIES Retries when the connection timeouts (default 3) --randomize=RPARAM Randomly change value for given parameter(s) --safe-url=SAFEURL URL address to visit frequently during testing --safe-post=SAFE.. POST data to send to a safe URL --safe-req=SAFER.. Load safe HTTP request from a file --safe-freq=SAFE.. Test requests between two visits to a given safe URL --skip-urlencode Skip URL encoding of payload data --csrf-token=CSR.. Parameter used to hold anti-CSRF token --csrf-url=CSRFURL URL address to visit to extract anti-CSRF token --force-ssl Force usage of SSL/HTTPS --hpp Use HTTP parameter pollution method --eval=EVALCODE Evaluate provided Python code before the request (e.g. "import hashlib;id2=hashlib.md5(id).hexdigest()") Optimization: These options can be used to optimize the performance of sqlmap -o Turn on all optimization switches --predict-output Predict common queries output --keep-alive Use persistent HTTP(s) connections --null-connection Retrieve page length without actual HTTP response body --threads=THREADS Max number of concurrent HTTP(s) requests (default 1) Injection: These options can be used to specify which parameters to test for, provide custom injection payloads and optional tampering scripts -p TESTPARAMETER Testable parameter(s) --skip=SKIP Skip testing for given parameter(s) --skip-static Skip testing parameters that not appear dynamic --dbms=DBMS Force back-end DBMS to this value --dbms-cred=DBMS.. 
DBMS authentication credentials (user:password) --os=OS Force back-end DBMS operating system to this value --invalid-bignum Use big numbers for invalidating values --invalid-logical Use logical operations for invalidating values --invalid-string Use random strings for invalidating values --no-cast Turn off payload casting mechanism --no-escape Turn off string escaping mechanism --prefix=PREFIX Injection payload prefix string --suffix=SUFFIX Injection payload suffix string --tamper=TAMPER Use given script(s) for tampering injection data Detection: These options can be used to customize the detection phase --level=LEVEL Level of tests to perform (1-5, default 1) --risk=RISK Risk of tests to perform (1-3, default 1) --string=STRING String to match when query is evaluated to True --not-string=NOT.. String to match when query is evaluated to False --regexp=REGEXP Regexp to match when query is evaluated to True --code=CODE HTTP code to match when query is evaluated to True --text-only Compare pages based only on the textual content --titles Compare pages based only on their titles Techniques: These options can be used to tweak testing of specific SQL injection techniques --technique=TECH SQL injection techniques to use (default "BEUSTQ") --time-sec=TIMESEC Seconds to delay the DBMS response (default 5) --union-cols=UCOLS Range of columns to test for UNION query SQL injection --union-char=UCHAR Character to use for bruteforcing number of columns --union-from=UFROM Table to use in FROM part of UNION query SQL injection --dns-domain=DNS.. Domain name used for DNS exfiltration attack --second-order=S.. Resulting page URL searched for second-order response Fingerprint: -f, --fingerprint Perform an extensive DBMS version fingerprint Enumeration: These options can be used to enumerate the back-end database management system information, structure and data contained in the tables. 
Moreover you can run your own SQL statements -a, --all Retrieve everything -b, --banner Retrieve DBMS banner --current-user Retrieve DBMS current user --current-db Retrieve DBMS current database --hostname Retrieve DBMS server hostname --is-dba Detect if the DBMS current user is DBA --users Enumerate DBMS users --passwords Enumerate DBMS users password hashes --privileges Enumerate DBMS users privileges --roles Enumerate DBMS users roles --dbs Enumerate DBMS databases --tables Enumerate DBMS database tables --columns Enumerate DBMS database table columns --schema Enumerate DBMS schema --count Retrieve number of entries for table(s) --dump Dump DBMS database table entries --dump-all Dump all DBMS databases tables entries --search Search column(s), table(s) and/or database name(s) --comments Retrieve DBMS comments -D DB DBMS database to enumerate -T TBL DBMS database table(s) to enumerate -C COL DBMS database table column(s) to enumerate -X EXCLUDECOL DBMS database table column(s) to not enumerate -U USER DBMS user to enumerate --exclude-sysdbs Exclude DBMS system databases when enumerating tables --pivot-column=P.. 
Pivot column name --where=DUMPWHERE Use WHERE condition while table dumping --start=LIMITSTART First query output entry to retrieve --stop=LIMITSTOP Last query output entry to retrieve --first=FIRSTCHAR First query output word character to retrieve --last=LASTCHAR Last query output word character to retrieve --sql-query=QUERY SQL statement to be executed --sql-shell Prompt for an interactive SQL shell --sql-file=SQLFILE Execute SQL statements from given file(s) Brute force: These options can be used to run brute force checks --common-tables Check existence of common tables --common-columns Check existence of common columns User-defined function injection: These options can be used to create custom user-defined functions --udf-inject Inject custom user-defined functions --shared-lib=SHLIB Local path of the shared library File system access: These options can be used to access the back-end database management system underlying file system --file-read=RFILE Read a file from the back-end DBMS file system --file-write=WFILE Write a local file on the back-end DBMS file system --file-dest=DFILE Back-end DBMS absolute filepath to write to Operating system access: These options can be used to access the back-end database management system underlying operating system --os-cmd=OSCMD Execute an operating system command --os-shell Prompt for an interactive operating system shell --os-pwn Prompt for an OOB shell, Meterpreter or VNC --os-smbrelay One click prompt for an OOB shell, Meterpreter or VNC --os-bof Stored procedure buffer overflow exploitation --priv-esc Database process user privilege escalation --msf-path=MSFPATH Local path where Metasploit Framework is installed --tmp-path=TMPPATH Remote absolute path of temporary files directory Windows registry access: These options can be used to access the back-end database management system Windows registry --reg-read Read a Windows registry key value --reg-add Write a Windows registry key value data --reg-del Delete a Windows 
registry key value --reg-key=REGKEY Windows registry key --reg-value=REGVAL Windows registry key value --reg-data=REGDATA Windows registry key value data --reg-type=REGTYPE Windows registry key value type General: These options can be used to set some general working parameters -s SESSIONFILE Load session from a stored (.sqlite) file -t TRAFFICFILE Log all HTTP traffic into a textual file --batch Never ask for user input, use the default behaviour --binary-fields=.. Result fields having binary values (e.g. "digest") --charset=CHARSET Force character encoding used for data retrieval --crawl=CRAWLDEPTH Crawl the website starting from the target URL --crawl-exclude=.. Regexp to exclude pages from crawling (e.g. "logout") --csv-del=CSVDEL Delimiting character used in CSV output (default ",") --dump-format=DU.. Format of dumped data (CSV (default), HTML or SQLITE) --eta Display for each output the estimated time of arrival --flush-session Flush session files for current target --forms Parse and test forms on target URL --fresh-queries Ignore query results stored in session file --hex Use DBMS hex function(s) for data retrieval --output-dir=OUT.. Custom output directory path --parse-errors Parse and display DBMS error messages from responses --save=SAVECONFIG Save options to a configuration INI file --scope=SCOPE Regexp to filter targets from provided proxy log --test-filter=TE.. Select tests by payloads and/or titles (e.g. ROW) --test-skip=TEST.. Skip tests by payloads and/or titles (e.g. BENCHMARK) --update Update sqlmap Miscellaneous: -z MNEMONICS Use short mnemonics (e.g. "flu,bat,ban,tec=EU") --alert=ALERT Run host OS command(s) when SQL injection is found --answers=ANSWERS Set question answers (e.g. 
"quit=N,follow=N") --beep Beep on question and/or when SQL injection is found --cleanup Clean up the DBMS from sqlmap specific UDF and tables --dependencies Check for missing (non-core) sqlmap dependencies --disable-coloring Disable console output coloring --gpage=GOOGLEPAGE Use Google dork results from specified page number --identify-waf Make a thorough testing for a WAF/IPS/IDS protection --skip-waf Skip heuristic detection of WAF/IPS/IDS protection --mobile Imitate smartphone through HTTP User-Agent header --offline Work in offline mode (only use session data) --page-rank Display page rank (PR) for Google dork results --purge-output Safely remove all content from output directory --smart Conduct thorough tests only if positive heuristic(s) --sqlmap-shell Prompt for an interactive sqlmap shell --wizard Simple wizard interface for beginner users

ModelContextProtocol-Security

mcpserver-audit: Helps you check if MCP servers are safe before using them. Examines servers for security problems, supports publishing findings in audit-db and vulnerability-db. Part of the Model Context Protocol Security initiative, a Cloud Security Alliance project.

AnimeshMondol

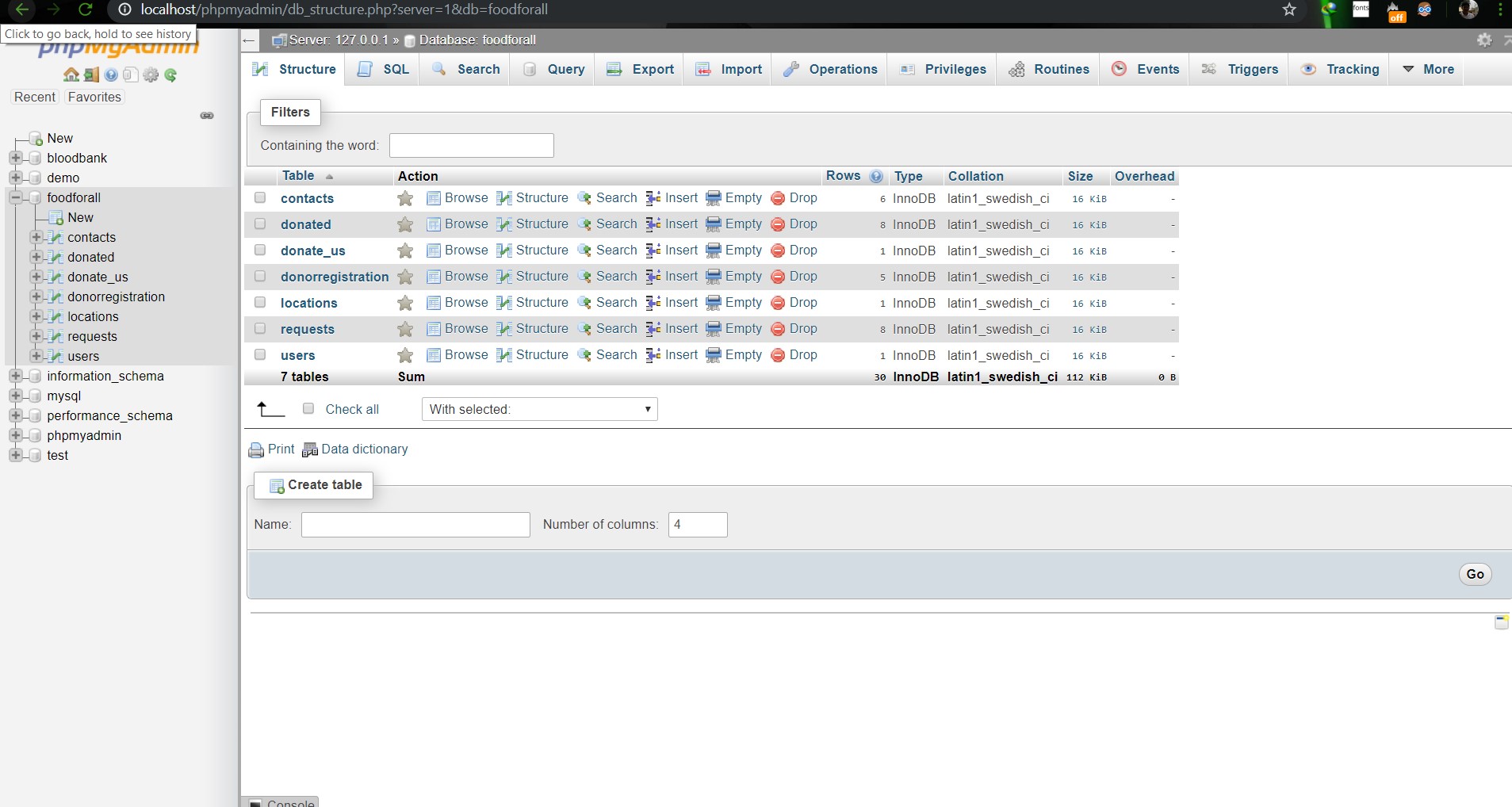

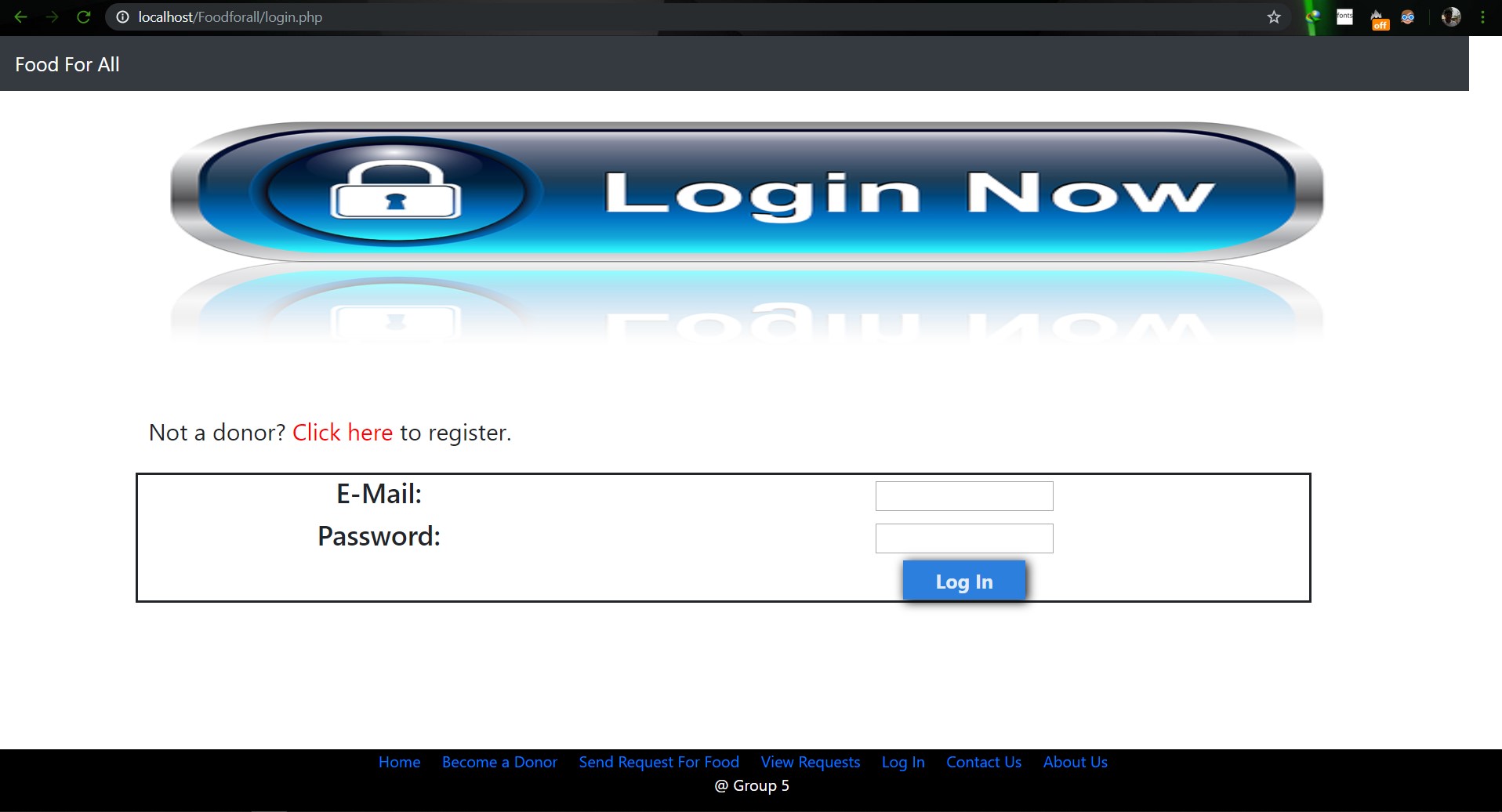

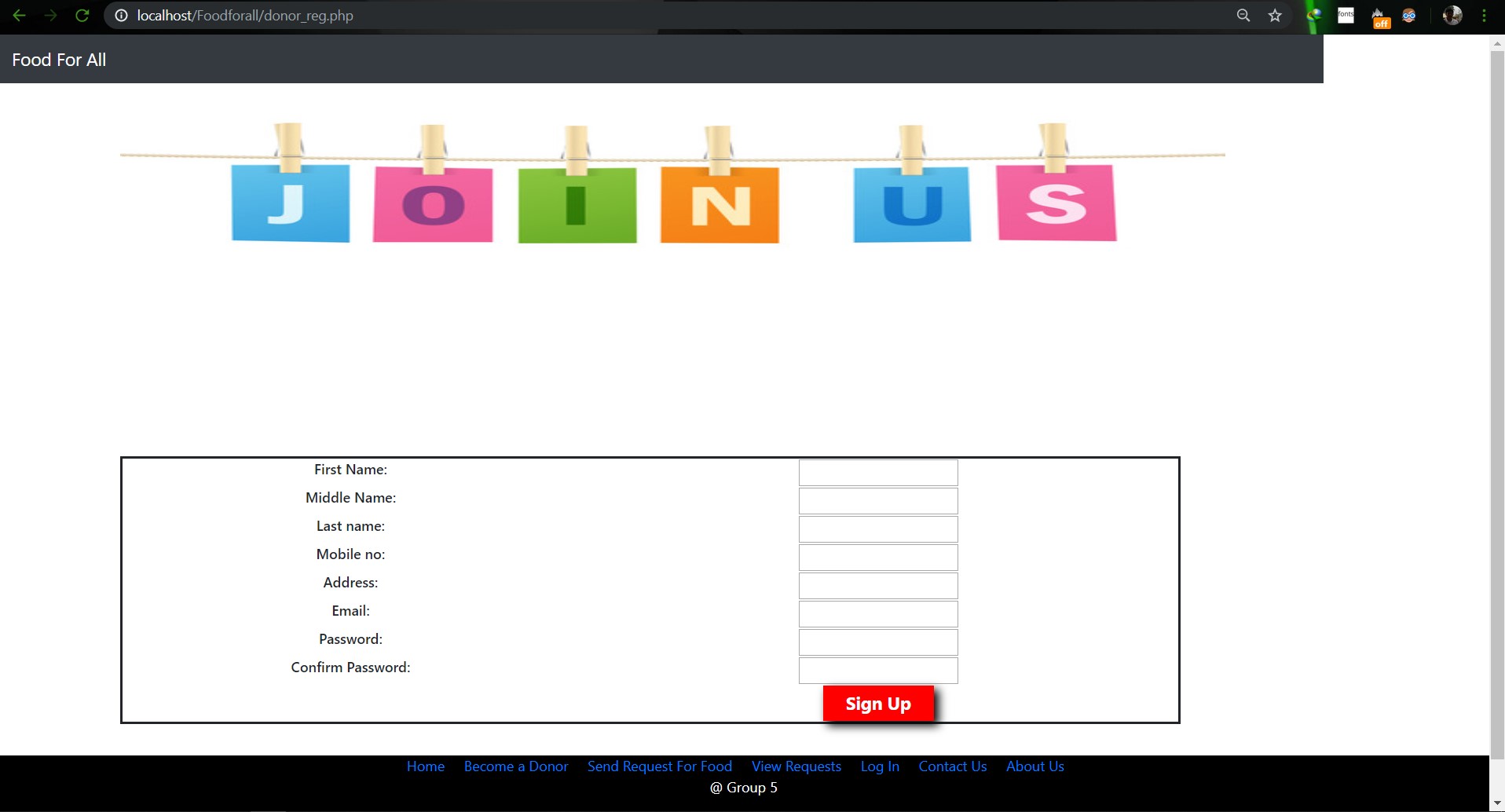

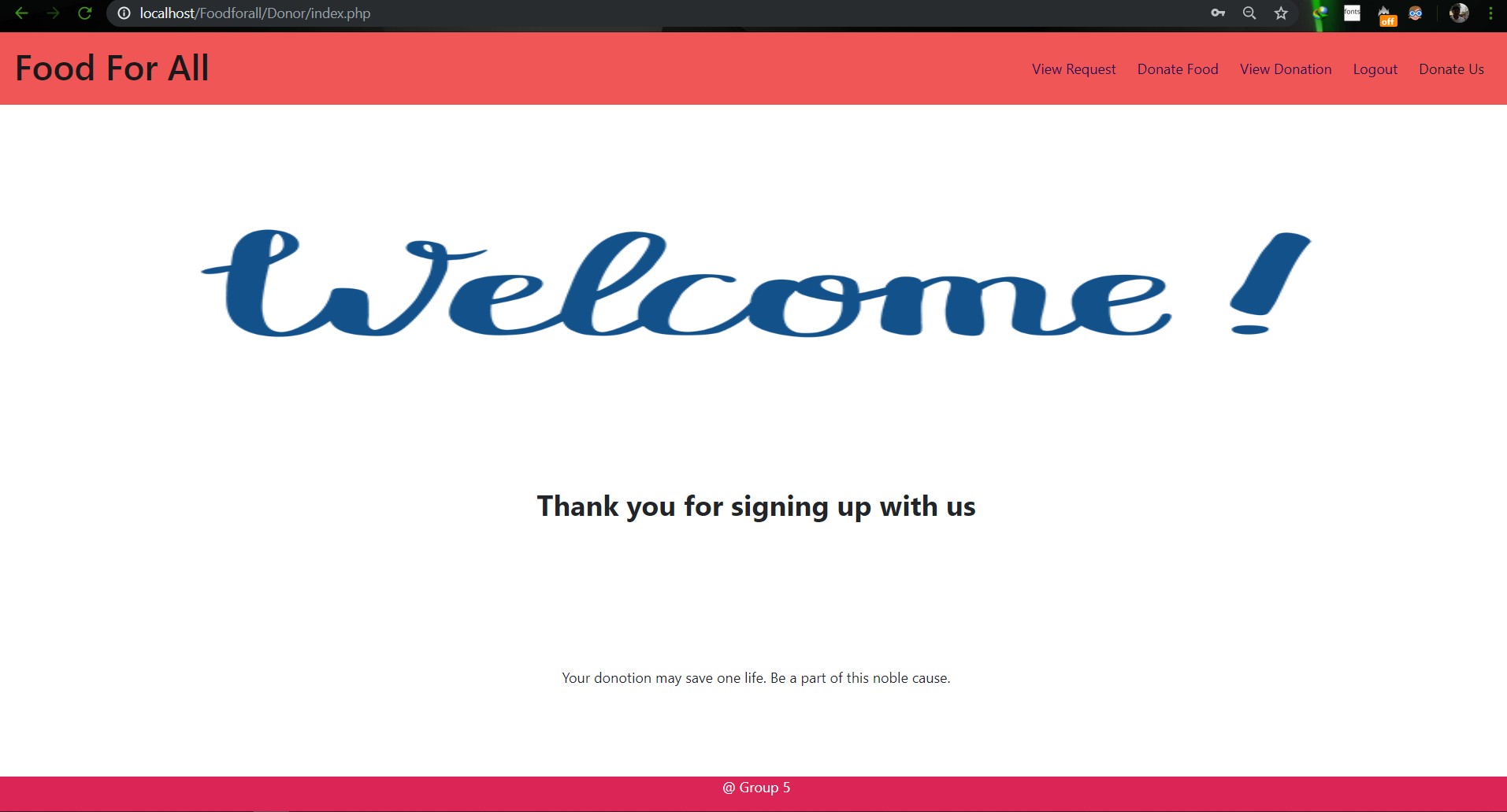

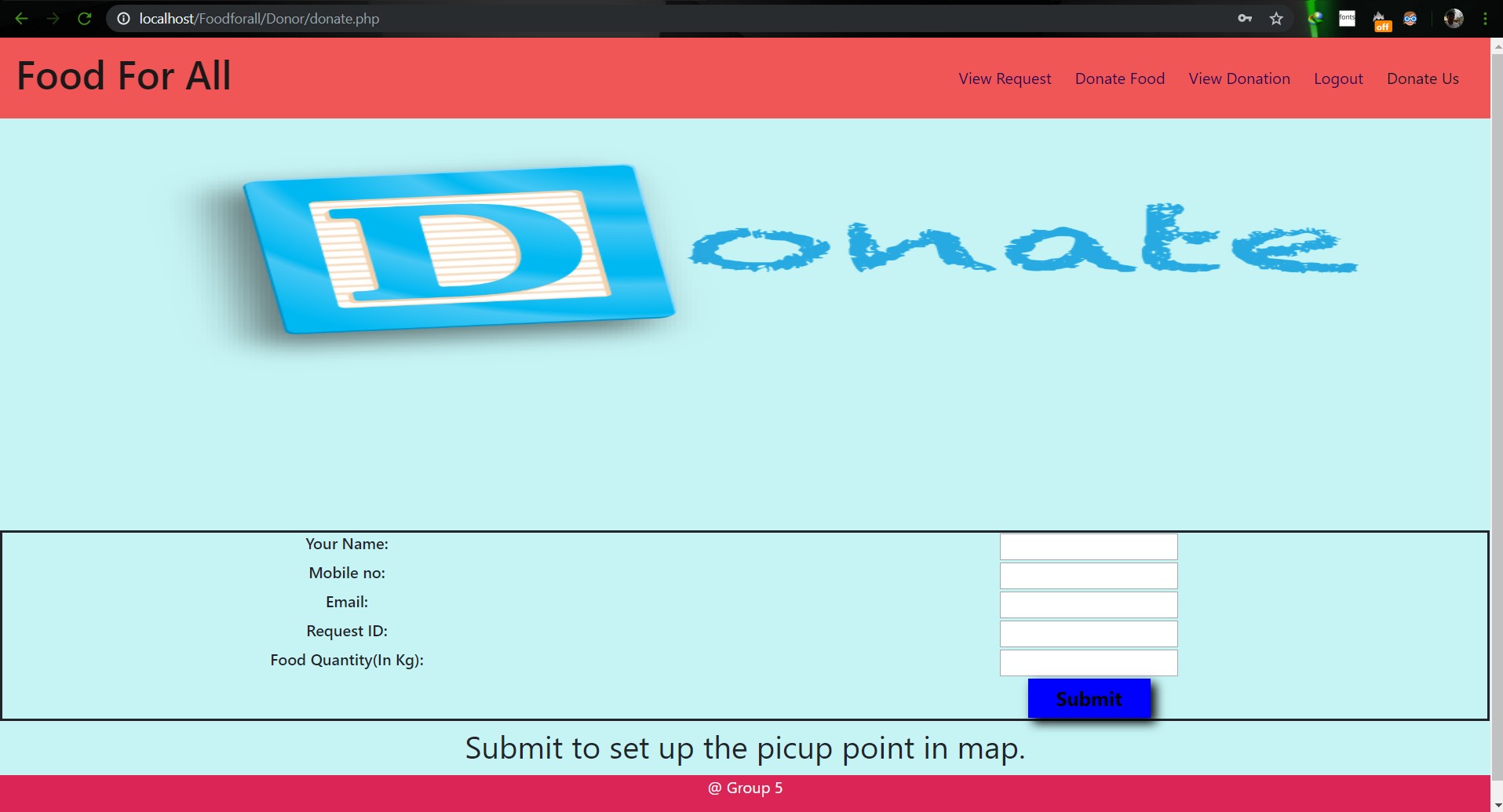

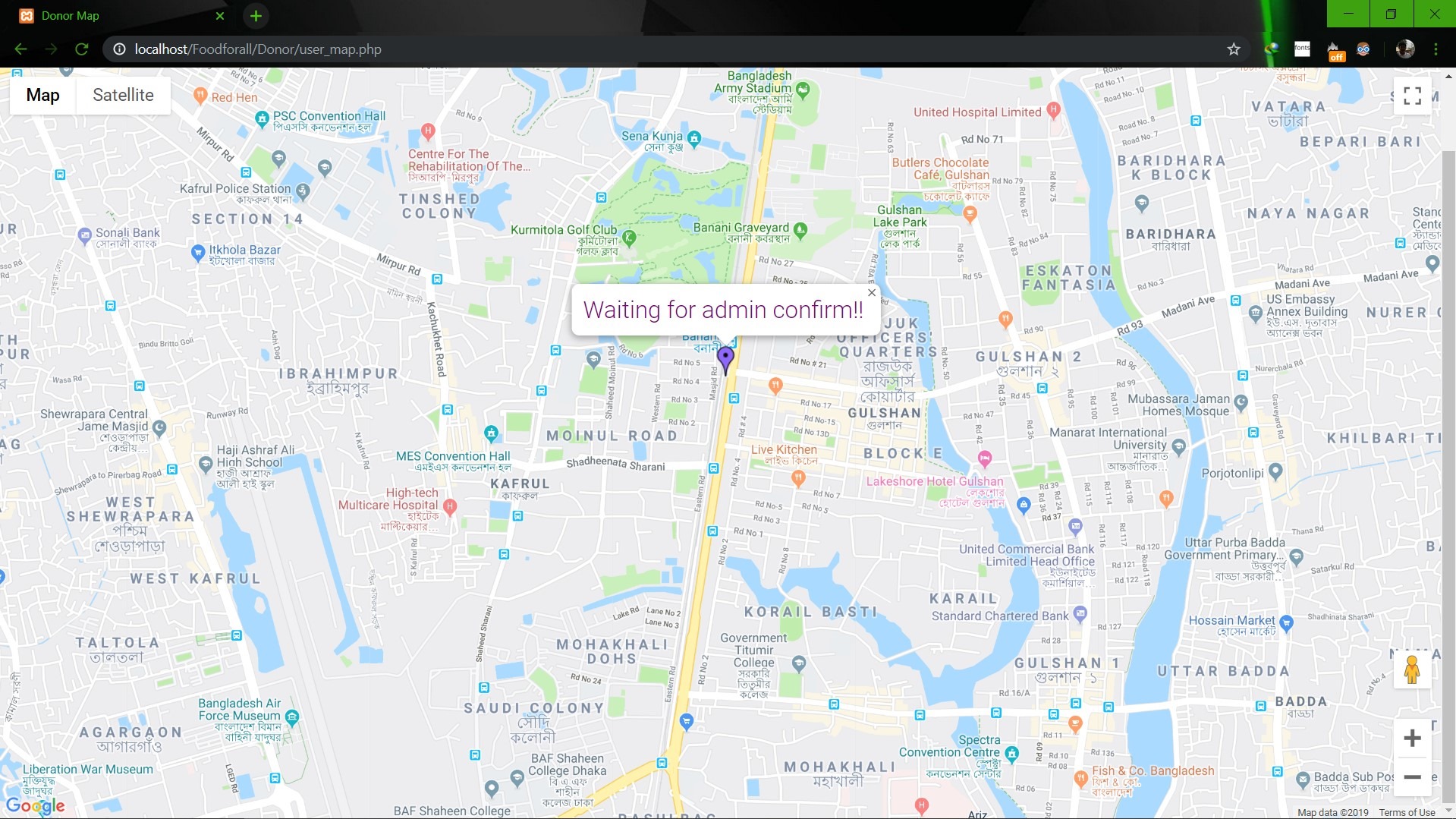

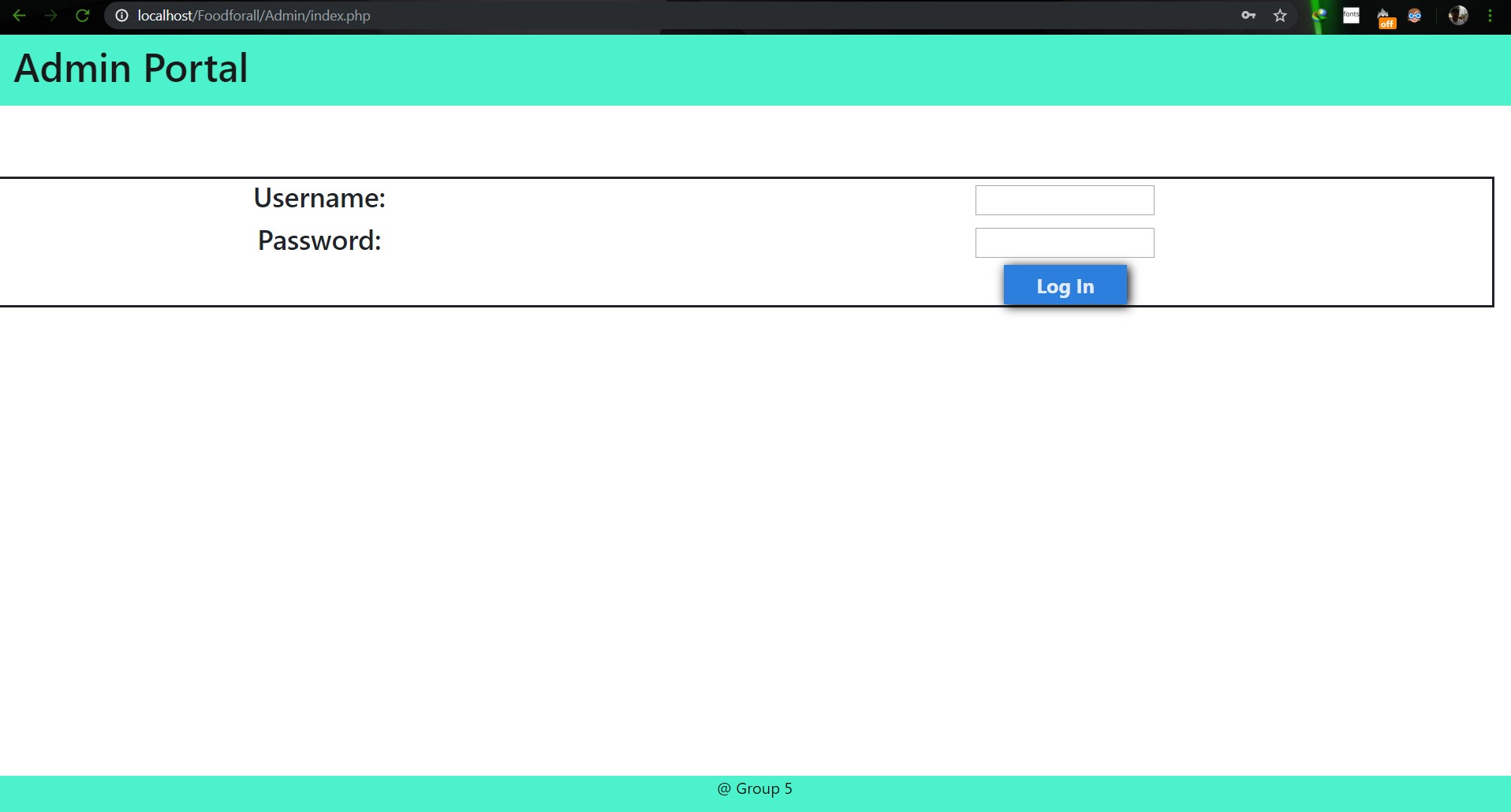

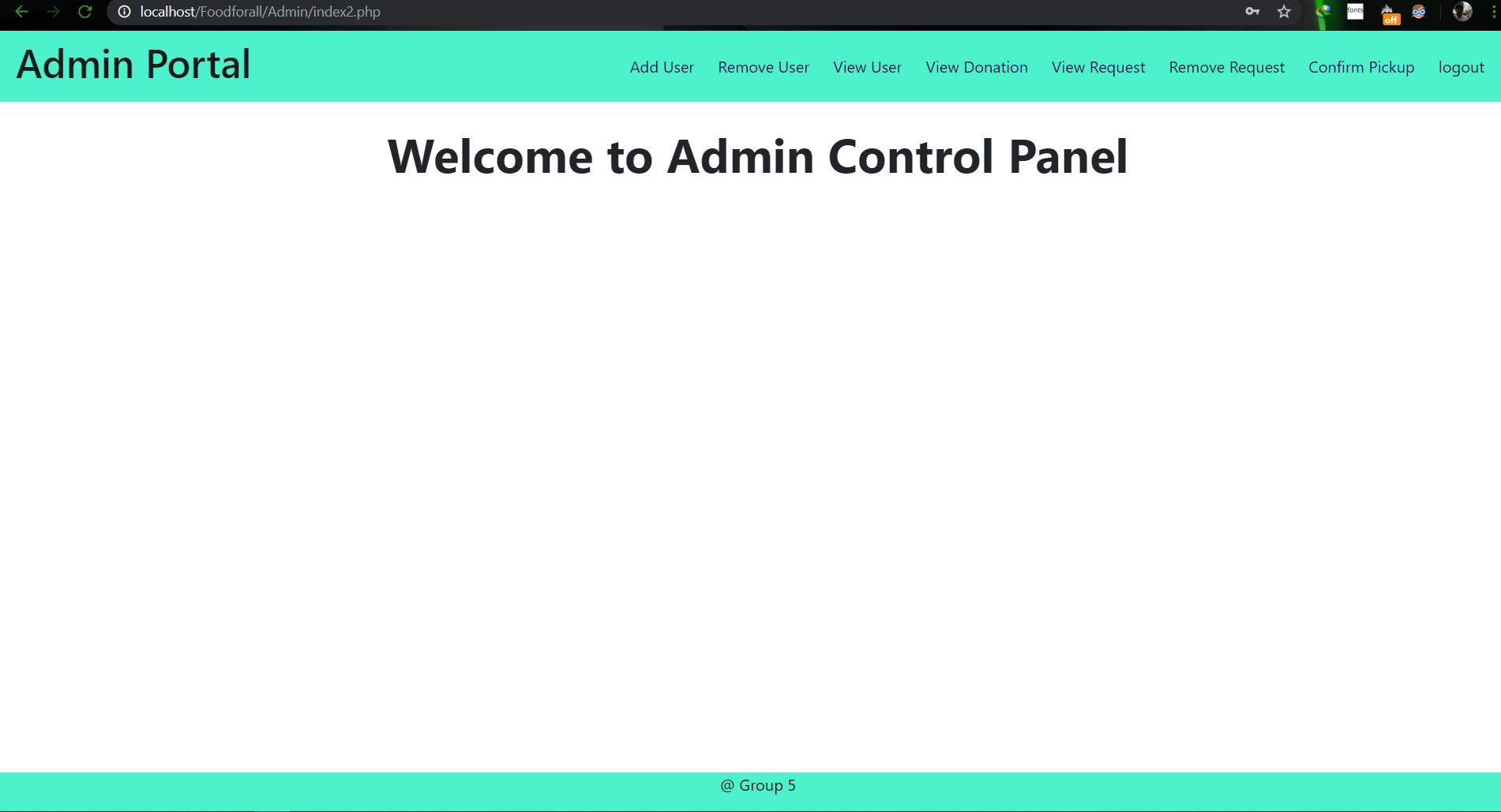

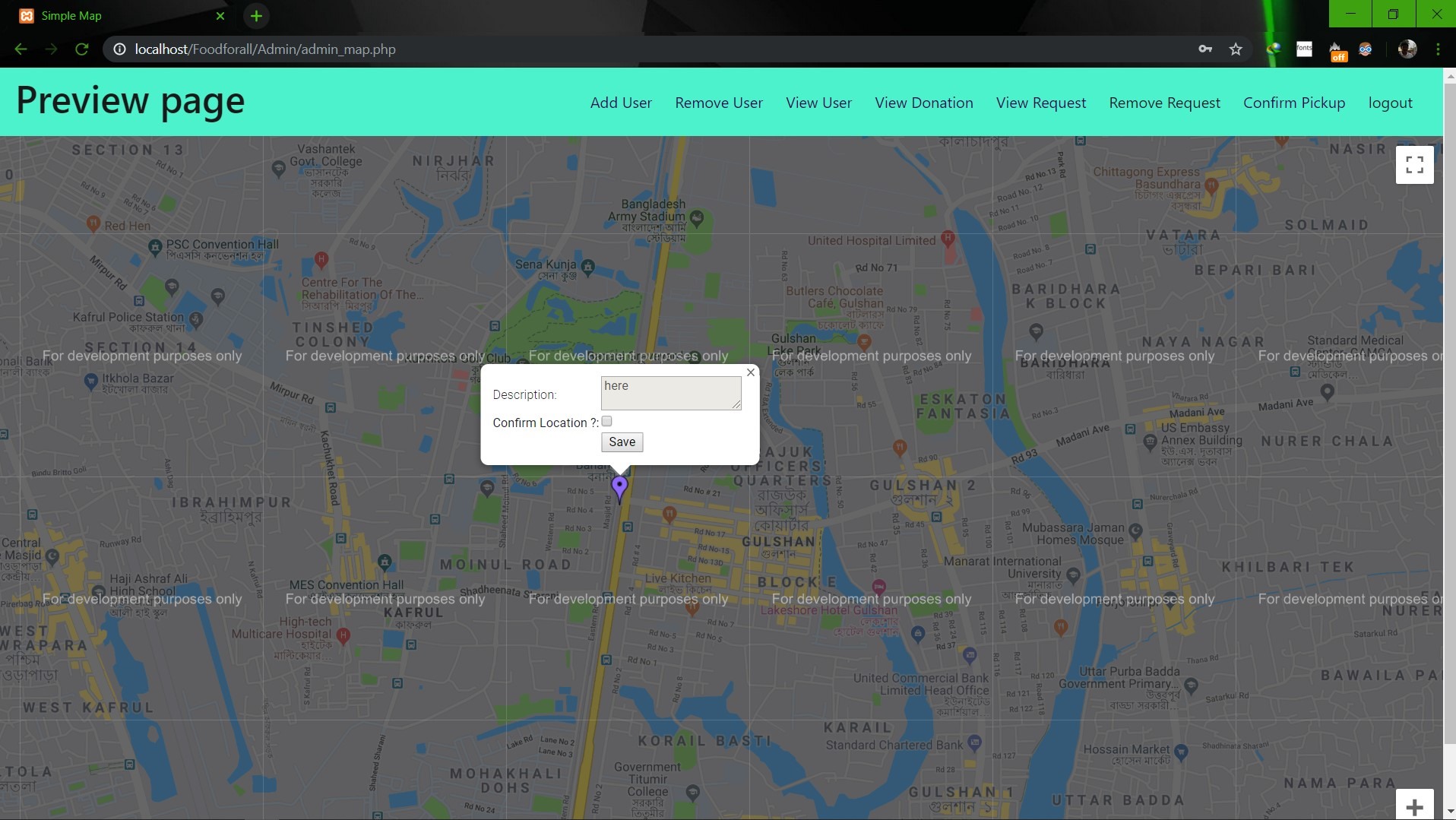

# SU19CSE299S02G05 <p align="center"> <img width="200" height="200" src="https://media.licdn.com/dms/image/C560BAQEFJPl7DXD1Dg/company-logo_200_200/0?e=2159024400&v=beta&t=4wzyvb7GBsvMovoet_LGS9uj_Gso_kmfWqCXnqydCDI"> </p> <h1 style="text-align: center">North South University</h1> # Project Name: Stop Food Waste **CSE299: JUNIOR DESIGN** **SEC: 02, Group: 05** **Instructor:** **SHAIKH SHAWON AREFIN SHIMON (SAS3)** **Semester:** **Summer 2019** <br> **GROUP MEMBERS** 1. **Animesh Mondol** **ID: 1611971042** **Email: animesh.sarkar02@northsouth.edu** 2. **Shamsunnar Sumi** **ID: 1621762042** **Email: shamsunnar.sumi@northsouth.edu** **GitHub Repository Link:** **https://github.com/AnimeshMondol/SU19CSE299S02G05NSU** **Date Prepared: 19/06/2019** <br><br><br><br><br> **Project Details:** Our project idea is Stop Food Waste. In our country, large amounts of food are wasted at various events. We want to build a web app where people can donate that food to people in need. With this web app we are trying to address the food shortage faced by part of our country's population. We also want users to be able to access the web page from a mobile phone. **Features:** <br> **Login:** The system provides security through email-password matching, so that only authorized users can access the system. **Admin Login:** In this part the manager maintains the donated items and donor details. He/she can view all the information and edit it. He/she can assign people to pick up the food from locations shown in Google Maps. 1. Add user 2. Remove user 3. View user 4. View request 5.
Remove request 6. View donation 7. Confirm pickup location 8. Logout **User Login:** In this part the user can log in and see all the donors, and there is an option for the user to become a donor. He/she can also see on the map where to go to pick up the food. **User Login:** 1. Donate food 2. Sign In 3. Become a donor 4. Send request 5. View request 6. About us 7. Contact us 8. Logout **Donate food:** In this part the user can donate food by looking up the request ID sent by another user. It also contains a form where the donor adds his name, mobile, email, req_id and quantity. Submitting the form takes the donor to the user map to set the location on the map. **Request for food:** In this part the user can submit a food request to the website so that other users (donors) can donate food to them. **Donate Us** In this part the user has an option to donate money to us for development purposes. This part lists the Bkash and Rocket numbers to which donors can send donations. It contains a form asking for name, mobile, amount and transaction ID, which are stored in the DB. **View requests** Here the user can see the pending requests for food. **About Us:** This section contains information about the program. **Contact Us** This part contains information about how to contact us, and also a part where the user can post comments and ask the admin directly for help. **Technology:** HTML, PHP, CSS, Bootstrap template, MySQL Server, Google Maps API. **Business Plan:** The web app is free for everyone to use. No payment is needed to create an account. We want to monetize the webpage through Google AdSense. Also, any donor who wants to donate money can do so through Bkash or Rocket. <br><br> **Design:** We used the Bootstrap template, with all the CSS and JS files downloaded from their website.
We did not add any extra design to the webpage, but we used some image files to improve the website's appearance. **Planning:** After selecting the project, we started by creating a UML diagram, which made our work easier and helped us understand what we needed to include in our website. Then we created issues in the project board and worked through them in weekly submissions. The project contains a total of 43 closed issues that were used to build this website. All the details are shown in the project board https://github.com/AnimeshMondol/SU19CSE299S02G05NSU/projects/1 **What did/didn't work:** Around 85-90% of our project runs very well, but we faced some problems: 1. As we were unable to construct the foreign key in the DB, after login the user needs to input his name, mobile number, email and other information manually. 2. For the donate us page under user, we did not find a proper way to confirm to the user whether his donation was received. So we manually take the name, mobile, donated amount and transaction ID and store them in the DB. 3. The admin map has some bugs that we were unable to fix. It does not refresh after the admin confirms a pickup. 4. We wanted users to make pickup requests from their phones, but we were not able to make the website suitable for phones. **Screenshots:** **Image: DB(Foodforall)** <br> **Image: Homepage** <br> **Image: Login Page** <br> **Image: Join us Page** <br> **Image: Donor Login Page** <br> **Image: Food Donation** <br> **Image: User map** <br> **Image: Admin Login** <br> **Image: Admin Home** <br> **Image: Admin map** <br><br><br> **Conclusion:** 1. First of all we learnt how to use GitHub.
It was completely new for us, but we now know how to use it. 2. We learnt about PHP, HTML and how to create a DB connection in MySQL to build a project. 3. If we had more time, we might be able to make the full project work properly. 4. In the future, if we get the chance, we also want to create an Android app for this website. **References:** 1. https://getbootstrap.com/docs/4.3/examples/starter-template/ 2. https://www.w3schools.com/ 3. https://www.youtube.com/ 4. https://stackoverflow.com/questions/22138746/php-form-not-inserting-into-mysql-database 5. https://www.google.com/search?q=html+color+picker&oq=html+&aqs=chrome.0.69i59j69i57j69i60j69i65l2j69i60.3167j0j7&sourceid=chrome&ie=UTF-8 6. https://www.geeksforgeeks.org/ 7. https://www.youtube.com/watch?v=q2VV3-yWupU 8. https://bitbucket.org/webeasystep/markers_manager_php_mysql/src/master/

pointofsale

MongoDB console commands //showing the existing dbs show dbs //switching to db test (it is only created once data is actually added) use test //prints the name of the current working db db //the following prints the count() of the links2 collection in the current db > db.links2.count() //inserting a record in links2 db.links2.insert({title:"unn titulo", url:"", comment:"", tags:["un primer tag", "un segundo tag"], saved_on: new Date}) //working with an object the JavaScript way... data = {} | data.title = "un titulo" | data.tags = ["un tag", "otro"] | data.meta = {} | data.meta.OS = "win7" | db.links2.insert(data) //printing the result of the find in structured JSON format db.links2.find().forEach(printjson) //--> in this case we pass the printjson function to forEach... //retrieving only the first of the results of the find method db.links2.find()[0] db.links2.find()[0]._id //getting the timestamp embedded in the _id value (the ObjectId includes its creation time) db.links2.find()[0]._id.getTimestamp() /*the following function, when called, uses a separate collection in the same working db to track the last id number issued. This gives the same behaviour as auto-increment ids in relational DBs.*/ //you have to declare this function yourself... function counter(name) { var ret = db.counter.findAndModify({query:{_id:name}, update:{$inc:{next:1}}, "new":true, upsert:true}); return ret.next; } //so you can do something like db.products.insert({_id:counter("products"), nombre:"primer nombre"}) //the result is something like: { "_id": 1, "name": "un producto" } { "_id": 2, "name": "otro producto" } /*referencing in MongoDB*/ db.users.insert({name:"Richard"}) var a = db.users.findOne({name:"Richard"}) db.links2.insert({title:"primer titulo", author:a._id}) //reference to another collection through the _id key... //querying db.users.findOne({ _id:link.author }) //a way to make manual inner joins...
within the users collection, we look up the _id stored in the author field of the links2 collection. ---note--- embedding is much more efficient when we have significantly more reads than writes. Otherwise, consider using the normalized way. This depends on each case. /**/ #importing data from a .js file in JSON format. With mongod running, or running as a service: > ../../../mongodb/bin/mongo 127.0.0.1/bookmarks bookmarks.js //the first part is the path to the mongo executable in the usual mongo location //the second part is the server and db we will be importing into //the third part is the file with all the mongo commands... --this bookmarks file is in C:\Tuto\mongo\trying -- https://raw.github.com/tuts-premium/learning-mongodb/master/08%20-%20bookmarks.js /*bookmarks.js extract*/ var u1 = db.users.findOne({ 'name.first': 'John' }), u2 = db.users.findOne({ 'name.first': 'Jane' }), u3 = db.users.findOne({ 'name.first': 'Bob' }); db.links.insert({ title: 'Nettuts+', url: 'http://net.tutsplus.com', comment: 'Great site for web dev tutorials', tags: ['tutorials', 'dev', 'code'], favourites: 100, userId: u1._id }); /**/ //connecting directly to db bookmarks > ../../../mongodb/bin/mongo bookmarks //searching the collection for all docs whose tags array contains the "code" element. //this can be done because we are dealing with an array --> array advantages... db.users.find({tags:"code"}).forEach(printjson) //with findOne you can do (not with find) findOne().name db.links.find({favourites:100}, {title:true, url:1}) //selecting only some fields (the projection must be passed as an object)... db.links.find({favourites:100}, {tags:0}) //selecting all but the tags field... //selecting inside an object...
db.users.findOne({"name.first": "John"}) db.users.findOne({"name.first": "John"}, "name.last":1) var john = db.users.findOne({"name.first": "John"}) db.links.find({userId:john._id}, {title:1, _id: 0}) /*queries directives*/ //greater than 150 db.links.find({favourites:{$gt:150}}, {_id:0, favourites:1, title:1}).forEach(printjson) db.links.find({favourites:{$gt:150}}, {_id:0, favourites:1, title:1}).count() //less than db.links.find({favourites:{$lt:150}}, {_id:0, favourites:1, title:1}).forEach(printjson) //$lte, $gte -- and iqual //using in db.users.find({"name.first":{$in:["John", "Jane"]}}) //the opposite is $nin db.users.find({"name.first":{$nin:["John", "Jane"]}}) //$all -- only the records with all the specifications in "tags" field. db.links.find({tags: {$all:["code", "marketplace"]}}, {title:1, tags:1, _id:0}) //$ne -- not equal //the $or flag search for the fullfillment of at least one of the elements in the array passed... db.users.find({$or: [{"name.first": "John"}, {"name.last": "Wilson"}]}) //the opposite: $nor //inclusive: $and //$exists db.users.find({email: {$exists: true}}) //$mod db.links.find({favourites: {$mod: [5, 0]}}, {_id:0, title:1, favourites:1}) db.links.find({favourites: {$not: {$mod: [5, 0]}}}, {_id:0, title:1, favourites:1}) //elemMatch -- inside logins, search for an element match that has minutes = 20, and return the complete record db.users.find({logins: {$elemMatch: {minutes: 20}}}) //searching for an 'at' prior to 2012/03/30.. and returning the whole record... 
db.users.find({logins: {$elemMatch: {at: { $lt: new Date(2012, 3, 30)}}}}) //note: JavaScript months are 0-based, so new Date(2012, 3, 30) is 30 April 2012 //using where -- c) is equivalent to a) a) db.users.find({ $where: 'this.name.first === "John"'}) b) db.users.find({ $where: 'this.name.first === "John"', age:30}) c) db.users.find( 'this.name.first === "John"') //injecting functions in mongodb -- as this example returns true/false at random, it is going to return documents randomly var frand = function() {return Math.random() > 0.5} db.users.find(frand) // var f = function() { return this.name.first === "John"} db.users.find(f) //or db.users.find( {$where: f} ) //other queries //distinct -- returns a list of the distinct values db.links.distinct('favourites') --> [100, 32, 21, 78, ...] db.links.distinct("url") db.links.group({ key:{userId : true}, initial:{favCount: 0}, reduce: function (doc, o) {o.favCount += doc.favourites}, finalize: function(o) {o.name = db.users.findOne({ _id: o.userId}).name } }); *** //the final part is not working... db.links.group({ key:{userId : true}, initial:{favCount: 0}, reduce: function (doc, o) {o.favCount += doc.favourites} }); db.links.group({ key:{userId : true}, initial:{favCount: 0}, reduce: function (doc, o) {o.favCount += doc.favourites}, finalize: function(o) {o.name = "richard"}} ); //regex db.links.find({ title: /tuts\+$/}) db.links.find({ title: {$regex: /tuts\+$/}}, {title:1}) //counting db.users.count({'name.first': 'John'}) db.users.count(); //all users in the collection //sorting, limit db.links.find({}, {title:1}).sort({title:1}).limit(1) //1: asc -1: desc //sorting, skipping and limiting... normal behaviour in a pagination routine... db.links.find({}, {title:1, _id:0}).sort({title:1}).skip(3).limit(3) /*updating*/ //by replacement or by modification...
---general form /* db.collection.update( <query>, <update>, { upsert: <Boolean>, //if not found insert multi: <Boolean>, //change in all the condition <query> is fullfilled } ) */ // more info in http://docs.mongodb.org/manual/reference/method/db.collection.update/ db.users.update({-the query object-}, {-the update object-}, -upsert boolean-); var n = {title:"Nettuts+"} db.links.find(n, {title:1}) db.links.update(n, {$inc: {favourites: 5}}) var q = {"name.last": "Doe"} db.users.find(q, {name:1}) //we can use set to update a field or add a completly new one... db.users.update(q, {$set: {"name.last": "Doetix"}}) //modifying an existing field.. db.users.update(q, {$set: {"email": "doetix81@gmail.com"}}) //inserting a new one... //to remove a field w use unset db.users.update(q, {$unset: {job: "Web developper"}}) db.users.update({"name.first":"John"}, {$set: {job:"Web developer"}}, false, true) //modifying and then inserting an object var bob = db.users.findOne({"name.first":"Bob"}) >bob { "_id" : ObjectId("525f06242df9763abe646b62"), "name" : { "first" : "Bob", "last" : "Smith" }, "age" : 31, "email" : "bob.smith@gmail.com", "passwordHash" : "last_password_hash" } > bob.job = "Thick Brush Painter" > db.users.save(bob) //find and modify -- findAndModify {{}} /* The findAndModify command atomically modifies and returns a single document. By default, the returned document does not include the modifications made on the update. To return the document with the modifications made on the update, use the new option. { findAndModify: <string>, query: <document>, sort: <document>, remove: <boolean>, //one of | update: <document>, //this two | new: <boolean>, //if the new object must be shown or the old one.. 
fields: <document>, //fields to show in the result upsert: <boolean> } */ > db.links.findAndModify({ query:{favourites: {$gt:150}}, sort:{title:1}, update:{favourites: 333}, new: true, fields: {_id:0} }); //pushing into arrays db.links.update(n, { $push: {tags: "jobs"}}) > db.links.findOne(n).tags //several... db.links.update(n, {$pushAll:{tags: ['blogs','press','contests']}}) //only push into the array if the new element is not already present.. db.links.update(n, {$addToSet:{tags: "dev"}}) //doing the same with an array... db.links.update(n, {$addToSet:{ tags:{$each: ["dev", "interviews"]} }}) //pulling content out of the array... db.links.update(n, {$pull: {tags:'interviews'}}) //pulling several... db.links.update(n, {$pullAll: {tags: ['blogs','dev', 'contests']}}) //popping from the beginning or the end.. db.links.update(n, {$pop: {tags: 1}}) //--from the end (-1 -- from the beginning) //positional operator... only the subobject gets updated... db.users.update({'logins.minutes': 20} , {$inc:{ 'logins.$.minutes': 10}}, false, true) db.users.update({'logins.minutes': 20} , {$set:{ 'logins.$.location': 10}}, false, true) db.users.update({'logins.minutes': 30}, {$set: {random: true}}, false, true) //renaming a field... db.links.update({url: {$exists: true}}, {$rename:{"url": "camino"}}, false, true); //more info on the positional operator in: http://docs.mongodb.org/manual/reference/operator/update/positional/ //taken from there: /* The positional $ operator facilitates updates to arrays that contain embedded documents. Use the positional $ operator to access the fields in the embedded documents with the dot notation on the $ operator.
db.collection.update( { <query selector> }, { <update operator>: { "array.$.field" : value } } ) */ /***EXAMPLE Consider the following document in the students collection whose grades field value is an array of embedded documents: { "_id" : 4, "grades" : [ { grade: 80, mean: 75, std: 8 }, { grade: 85, mean: 90, std: 5 }, { grade: 90, mean: 85, std: 3 } ] } Use the positional $ operator to update the value of the std field in the embedded document with the grade of 85: db.students.update( { _id: 4, "grades.grade": 85 }, { $set: { "grades.$.std" : 6 } } ) ***/ //removing db.users.remove({'name.first': "John"}) //all the collections in the selected db... show collections //dropping a collection completely... db.acoll.drop() //indexes... db.links.find().explain() db.links.ensureIndex({ title: 1}) //in ascending order; the order is mainly important in compound indexes //a record of this index can be found in the db's system.indexes collection db.system.indexes.find(); //you could put an index on a changing value, but every time that value changes the index must be updated. Keep that in mind. //it is usually a good idea to set the indexes at the beginning, when no data is present in the collections. However, you could use the following form to handle duplicates and enforce unique data //keeping only the first one, deleting the others.. db.links.ensureIndex({ title: 1}, { unique: true, dropDups: true}) //for the case where some documents lack the indexed field, to save mongo from storing index entries for documents where the field has not been inserted: db.links.ensureIndex({ title: 1}, {sparse: true}) //it is important to think of a compound index as a nested one, an index of an index. The right order depends on the problem. For example, with recipes, indexing first the ingredient and then the recipe makes more sense than indexing in reverse. It all depends on how you are going to search.
db.links.ensureIndex({ title: 1, url: 1}) //this one means that u can search on title; or on title and url... db.links.ensureIndex({ a: 1, b: 1, c: 1}) //searches are possible on a; a, b; a, b, c //deleting indexes db.links.dropIndex("title_1"); //the same way that appears in system.index collection... /*concepts to follow*/ //Sharding and Replica Set... http://www.slideshare.net/Dataversity/common-mongodb-use-cases-13695677 http://docs.mongodb.org/ecosystem/use-cases/product-catalog/ db.collection.update({"grades.grade":80}, { $set: {"grades.$.std": 18}})
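To make the effect of the last positional update concrete, here is a small in-memory Python sketch of what `{ $set: {"grades.$.std": 18} }` does for a query on `"grades.grade": 80`. It works on plain dictionaries and does not talk to MongoDB; the helper name `positional_set` and its parameters are made up for this illustration, and the sample document is the students example quoted in the notes.

```python
from copy import deepcopy


def positional_set(doc, array_field, match_key, match_value, set_key, new_value):
    """Mimic a positional $set on a plain dict (hypothetical helper, not a driver call).

    Only the first array element whose match_key equals match_value is changed,
    which is how the positional $ operator picks its target.
    """
    updated = deepcopy(doc)  # leave the caller's document untouched
    for element in updated.get(array_field, []):
        if element.get(match_key) == match_value:
            element[set_key] = new_value
            break  # stop at the first match
    return updated


student = {
    "_id": 4,
    "grades": [
        {"grade": 80, "mean": 75, "std": 8},
        {"grade": 85, "mean": 90, "std": 5},
        {"grade": 90, "mean": 85, "std": 3},
    ],
}

# Same intent as: update({"grades.grade": 80}, {$set: {"grades.$.std": 18}})
result = positional_set(student, "grades", "grade", 80, "std", 18)
print(result["grades"])
```

Only the embedded document with grade 80 gets a new `std`; the other two elements of the array are left as they were.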

johndowns

Code for 'Cosmos DB Server-Side Programming with TypeScript – Part 3: Stored Procedures'

7erry

Hazelcast Jet and IMDG with a MySQL source and CDC updates via the Kafka Connect Debezium connector, for a scenario with batch and real-time updates of securities data in MySQL.

In this example we want to test both real-time and batch feeds to IMDG, the write-through and read-through capabilities of Hazelcast IMDG, and data pipelining via Hazelcast Jet, all working against an RDBMS system of record (SOR). The RDBMS can be updated by many apps, and we want to demonstrate CDC via Kafka, using the Debezium MySQL connector to refresh the IMDG asynchronously, and also synchronously via a client app.

Setup is with docker-compose with 7 containers:
- 1 Lenses.io container with a single-node Kafka / Schema Registry / Kafka Connect / Kafka REST server plus the Kafka management UI
- 2 Hazelcast Jet/IMDG cluster node containers (hazelcast1, hazelcast2)
- 1 Hazelcast Jet/IMDG container used for submitting Jet jobs (hz_jet_submit)
- 1 Hazelcast IMDG management center container (mancenter)
- 1 Hazelcast Jet management center container (hz_jet_mancenter)
- 1 MySQL source DB container (mysql), which is the SOR for the demo

Use docker-compose to fire up all the above containers:

    docker-compose -f hazelcast-jet-ent-docker-compose.yaml up -d

We get real-time and historic stock market data from the Alphavantage Inc. APIs: daily OHLCV (open/high/low/close/volume) for the past 20 years (1999-2019) for the 30 stocks in the Dow Jones Industrial Average, as well as real-time data in 1-minute increments for the same stocks over a 7-day window. The MySQL container has a /scripts folder for data loading; the scripts create a securiries_master database and populate tables with the stock data above as well as reference data on all S&P 500 stocks.

To help with editing and debugging the DB scripts without respinning the containers every time, and to persist data locally on the host, the MySQL scripts, conf, and data folders are mapped to a host volume via docker-compose.
To run the data loading scripts inside the container:

    hazelcast-kafka-cdc-test $ docker exec -it mysql bash
    root@ee8690b41e43:/# /scripts/init-create-load-databases-tables.sh

The Kafka container has a scripts folder with the configuration for the Kafka Connect Debezium connector to MySQL, which handles CDC updates from the MySQL source DB. To configure the Debezium connector, open the Lenses UI on the Kafka container at http://localhost:3030 (username: admin, password: admin). Navigate to Connectors -> Create New Connector -> choose "CDC for MySQL", then copy the properties (the uncommented part) from kafka-scripts/debezium-mysql-connector.properties and paste them into the UI. The connector should start up and create several topics: one for capturing database-wide DDL events, and one topic per table for that table's update events.

To help with editing and debugging scripts or config files without respinning the containers every time, and to persist data locally on the host, scripts and config files are mapped to a host volume via docker-compose.

The Hazelcast management center is at http://localhost:8080/hazelcast-mancenter/login.html. Set up a login and verify that the cluster hz-jet-ent-cluster is operational with 3 Jet/IMDG nodes (running on ports 5701, 5702, 5703 of the host).

The Hazelcast Jet management center is at http://localhost:9090. Use the default credentials (admin/admin) to log in and verify that the cluster hz-jet-ent-cluster is operational with 3 Jet/IMDG nodes (running on ports 5701, 5702, 5703 of the host).

There is also a test Hazelcast Jet job, provided as a Maven project in the kafka2imap folder tree in the repo. It reads the topic "sp500_stocks" and writes to a Hazelcast IMap called "securities_,master.sp500_stocks". To build the shaded (fat) jar for submitting this job to Jet, run mvn clean package on the provided pom.xml file in the kafka2imap project folder.
This will create a jar with all dependencies included. (Note: to use Jet's built-in wrapper script to submit jobs from the command line to the Jet/IMDG cluster, we need to either package all dependencies and submit them together, or add the dependencies to the classpath as part of job submission, because there is no guarantee that dependencies will be available on all the nodes in the grid.)

To help with editing and debugging scripts or config files without respinning the containers every time, and to persist data locally on the host, there is a job-jars folder for job artifacts and a resources folder that holds all the script and config files, mapped to a host volume via docker-compose.

To submit jobs to Jet using the submit command-line utility, there is a convenience wrapper script that is run inside the hz_jet_submit container as follows:

    hazelcast-kafka-cdc-test $ docker exec -it hz_jet_submit bash
    bash-4.4# pwd
    /opt/hazelcast-jet-enterprise
    bash-4.4# cd job-jars/
    bash-4.4# ls
    kafka2imap-1.0-SNAPSHOT.jar run-kafka2imap-job.sh run-wordcount-job.sh test.out
    bash-4.4# ./run-kafka2imap-job.sh
    Verbose mode is on, setting logging level to INFO
    Submitting JAR './job-jars/kafka2imap-1.0-SNAPSHOT.jar' with arguments []
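The README describes refreshing an in-memory map from Debezium CDC events but does not show the job's logic. The following is a rough Python sketch of the general idea, with a plain dict standing in for the Hazelcast IMap. It assumes the standard Debezium change-event fields `op`, `before`, and `after`; the key column `symbol`, the row shape, and the function name are hypothetical and not taken from the repo.

```python
from typing import Any, Dict


def apply_change_event(store: Dict[Any, dict], event: dict, key_field: str = "symbol") -> None:
    """Apply one Debezium-style change event to an in-memory map.

    op "c" (create), "r" (snapshot read) and "u" (update) upsert the
    `after` row; op "d" (delete) removes the row found in `before`.
    """
    op = event["op"]
    if op in ("c", "r", "u"):
        row = event["after"]
        store[row[key_field]] = row
    elif op == "d":
        row = event["before"]
        store.pop(row[key_field], None)
    else:
        raise ValueError(f"unsupported op: {op!r}")


# A dict stands in for the IMap; the rows below are made-up sample data.
stocks: Dict[str, dict] = {}
events = [
    {"op": "c", "before": None, "after": {"symbol": "IBM", "name": "IBM Corp"}},
    {"op": "c", "before": None, "after": {"symbol": "MMM", "name": "3M"}},
    {"op": "u", "before": {"symbol": "IBM", "name": "IBM Corp"},
     "after": {"symbol": "IBM", "name": "International Business Machines"}},
    {"op": "d", "before": {"symbol": "MMM", "name": "3M"}, "after": None},
]
for event in events:
    apply_change_event(stocks, event)

print(stocks)
```

Keying the map by the table's primary key keeps updates idempotent: replaying the same event leaves the map in the same state.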

trflorian

A tiny web server using Python and FastAPI to wrap part of the REST API of Firebase Realtime DB.

samuelnoye

In this project, you will extend the Personal Budget API created in Personal Budget, Part I. In the first Budget API, we did not have a way to persist data on the server. Now, we are going to build out a persistence layer (aka a database or DB) to keep track of the budget envelopes and their balances. You will need to plan out your database design, then use PostgreSQL to create the necessary tables. Once your database is set up, connect your API calls to a database. Once you’ve added and connected your database, you will add a new feature to your server that allows users to enter transactions. This feature will put your envelope system into action! Finally, you will make your API available for others to use by documenting it with Swagger and deploying it with Heroku.

HsKA-OSGIS-archive

YOUR FIRST STEP:
-----------------------------------------------------------------
Web-based sharing of geo-information to help newly arrived international students in Karlsruhe with their initial problems.

Concept:
Karlsruhe is home to reputed educational institutes such as KIT and HSKA, so many international students have come in previous years, and the numbers increase year after year. The main problem noticed here is a lack of relevant information during their first days in Karlsruhe. International students often ask where they can find a room in Karlsruhe, or where Rothaus or the Mensa is, and how to get there. Studentwerk, ASTA, etc. are of course available to help these students, but most of them do not know where the Studentwerk or ASTA offices are actually located. So when they come here to study, they should have an idea of their first few steps before beginning their studies. The main goal of this project is to provide a dynamic web-based solution to those problems, so that every student can understand and get through the initial stage in Karlsruhe on their own. That is why the project is named 'YOUR FIRST STEP'.

Target Group:
All newcomer international students in Karlsruhe.

Area of Interest:
Karlsruhe, Germany

Developer:
-------------------------------------------------------------
Md. Shamimul Islam
Fickrie Muhammad
Murshed Alam

All are MSc students in the International Master's programme in Geomatics, University of Applied Sciences Karlsruhe, Germany.
How to load:
------------------------------------------------------------
Client Side:
- Clone this repository to your local machine.
- Copy the yourfirststep folder into c:\xampp\htdocs\xampp.
- The index.php file will then be reachable in the browser at http://localhost/xampp/yourfirststep (a database is required on the server side for all functions to work; see the Server Side part).
- Now you can edit or extend it to improve the application.
- HTML5 is used to build the web pages, JavaScript to display the map, and PHP to connect the website to the database and run queries.
- The code for each individual service page is in the service folder.

Connection:
- The config.php file makes the connection. The connection parameters are server name, user name, and password ("localhost", "root", ""), and the database name is "your_firststep".
  $connect = mysql_connect("localhost","root","") or die("Couldn't connect with Database. Please, try again!");
  mysql_select_db("your_firststep") or die("Couldn't find this Database!");

Server Side:
- Install XAMPP if you do not have it (https://www.apachefriends.org/de/download.html).
- Create a database named your_firststep and import the your_firststep.sql file from the Database folder. This database is connected to the website via PHP to display and store the clients' comments.
- A PostgreSQL database hosts the tables that store geometry data ('features') corresponding to the different 'layers' displayed in the 'pgrouting' client.
- The pgRouting extension is added (by default) to the PostgreSQL database. The 'ways' table is pgRouting geometry (the_geom) enabled, and its geometry is returned to the client application ('Your First Step') via GeoServer.
- GeoServer is the WMS service provider. It exposes the different PostgreSQL tables to the 'Your First Step' application through web service calls at http://localhost:8082.

Data Preparing:
- The geodata can be downloaded from OSM Metro Extracts (http://mapzen.com/metro-extracts/).
- Import the data into PostGIS with osm2pgrouting.
- Add your layers to your PostGIS database.
- Publish the layers from the PostGIS database on GeoServer.
- Create a workspace called "pgrouting".
- Create a store called "pgrouting".

PgRouting:
The following steps provide routing facilities to the client. For more information, see these links:
http://download.osgeo.org/pgrouting/foss4g2010/workshop/docs/pgRoutingWorkshop.pdf
http://workshop.pgrouting.org/chapters/php_server.html
- Create a database "routing" in PostgreSQL.
- Add the PostGIS and pgRouting extensions to the database (see workshop chapter 3.3):
  -- add PostGIS functions
  CREATE EXTENSION postgis;
  -- add pgRouting core functions
  CREATE EXTENSION pgrouting;
- Create a connection between this database and QGIS (Add PostGIS Table in QGIS).
- Follow the further instructions in the pgRouting workshop from FOSS4G (http://workshop.pgrouting.org/chapters/topology.html), chapter 4 (Create a Network Topology) and chapter 5 (PgRouting Algorithms).
- Test the routable database (ways layer) in QGIS using the pgRouting Layer extension.

Final Output:
www.your-firststep.de

Contact:
------------------------------------------------------
For any further questions, interest, problems, or discussion, please contact:
- Md. Shamimul Islam - rubel_ku03@yahoo.com (www.rubelshorizon.com)
- Fickrie Muhammad - fickrie.muhammad@yahoo.com

johndowns

Code for 'Cosmos DB Server-Side Programming with TypeScript – Part 5: Building and Deploying'

johndowns

Code for 'Cosmos DB Server-Side Programming with TypeScript – Part 4: Triggers' (Post-Trigger)

johndowns

Code for 'Cosmos DB Server-Side Programming with TypeScript – Part 4: Triggers' (Pre-Trigger)

ShehanThamoda

Uses the Axon dependency, with separate projects for the insert/update part and the data-retrieval part. MongoDB is used instead of Axon Server for data storage, and the query service uses a Redis server to retrieve the data.

CodeBloodedMama

Marketplace app with Node.js, Express, Postman, TypeScript, Nodemon, containerisation, Docker, Rancher/Docker Desktop, GraphQL, Apollo Server, RabbitMQ queues and brokers, microservices, Kubernetes Basics part 2, app+db pod.

djanyreason

This repository is for exercises in Part 4 of Full Stack Open, "Testing Express servers, user administration". This repo contains a back-end server built with Node.js and Express, which connects to a MongoDB database. The server and DB manage a collection of "blog post" objects and user authentication information.

gj100596

This project is part of the curriculum for the subject CS 744. A server holds the details of a few movies in a MySQL DB and their posters on the file system. The client can ask for the details of any movie.

iharshbhavsar

Technologies: HTML, CSS, Bootstrap, JavaScript, Pug, MongoDB, Express JS, Angular JS, Node JS, Node Package Manager.
• Created a complete working MEAN stack web application for Pharmacy Management with team members.
• Made a website that can retrieve the data of various medicines with validations, manage the inventory and update it.
• Made a requirements document with the various needs of the project before implementing the code.
• Created the structure of the website and installed the required libraries and modules.
• Set up the Node JS and Express JS server to work with the back-end part of the project.
• Created the database and model in the cloud using MongoDB with the required validations.
• Made a REST API to perform CRUD and various other operations with the database and on the website.
• Implemented the front-end part of the website using the Angular JS framework and its functions such as filters and services.
• Styled the website using Bootstrap.

In this repo I have built a multi-page React app where users can track COVID cases globally and per Indian state. Users can also check the weather for any location they want to travel to, and then book tickets accordingly. After booking, the booking details are stored in the database and are also shown in an admin portal, which I built on another platform using Laravel; the admin then contacts the user with a confirmation. The app uses several APIs for COVID and weather information, and presents the data as charts and cards. The booking form's backend is handled in PHP, so it may not work for now because it requires a local server and database; I will provide a solution for that soon, and for now I will share the PHP and DB scripts and related info. The application is still in the development stage.